Could Social Media Bans Trigger a Marketing Revolution You Didn’t See Coming?

Ever find yourself doom-scrolling late into the night, soaking up every last bit of the world’s chaos with nothing but your thumb for company? Yeah, me too. And amidst all that noise (the endless political dramas, climate crisis horror stories, and influencers flaunting their perfect lives), there’s a creeping question that lawmakers and parents alike are starting to shout louder: Should we slam the brakes on social media access for kids? Turns out, 68% of consumers support such bans, especially those juggling young children. For us marketers, this isn’t just another headline. These social media bans, already rolling out across the globe, are shaking up how we connect with audiences, navigate brand safety, and race through compliance mazes that feel more like obstacle courses. As the digital world morphs into a patchwork quilt of rules and restrictions, how do we keep our brands relevant and razor-sharp? Buckle up. We’re diving deep into the latest on these bans and what it means for your social strategy moving forward.

Conditions online can be harrowing. All of us are susceptible to doom-scrolling—absorbing the intensity of global political, humanitarian and climate crises with the flick of a thumb. Not to mention comparing our lives to influencers’ glossy content or our acquaintances’ highlight reels, letting unhealthy comparisons rear their ugly head.

With such dark sides to social, lawmakers worldwide have started to wonder: Do they have a responsibility to enact social media bans that protect children? Consumers think so, with 68% saying they support such bans, according to Sprout Social’s Q1 2026 Pulse Survey. Those with young children are the most likely to rally behind them.

For social media marketers, who already face a number of internal and external challenges in the course of doing their jobs, this means even more stress and brand safety risks. Many countries have already implemented, passed or strongly considered a social media ban.

This new legislation may make it more difficult to reach certain audiences, publish content efficiently, and stay compliant with ever-changing rule books and country-specific laws. Zooming out, as the world’s economies become more connected, it’s going to prove challenging for global brands to comply with so many disparate policies and maintain relevance.

To make it easier to navigate, we’ve laid out the facts we have so far about current and proposed social media bans. We’ve also asked global marketers for their takes on how social teams should adjust their workflows and content strategies as regulations around social media become more common.

Please note the information provided in this article does not, and is not intended to, constitute formal legal advice. Please review our full disclaimer before reading any further.

Current social media bans

While the state of social media bans is always changing, here are the pieces of legislation that have been passed at the time of publishing. For each, we cover which networks are included, the proposed enactment date if the law isn’t already in place and how the rules would be enforced.

The Australian social media ban

In late 2024, the Australian government passed one of the strictest social media laws in the world, banning all children younger than 16 from using social or creating accounts. The Australian social media ban applies to TikTok, Facebook, Snapchat, Reddit, X, Instagram and YouTube. It puts the onus of keeping those under 16 from using social on the networks themselves, and failure to do so would result in fines up to $50 million AUD. The law tasks networks with verifying users’ ages through ID, behavioral signals and biometrics (which has spurred debates about privacy).

The Australian government reported 4.7 million under-16 accounts were deactivated, removed or restricted within three days of the ban going into effect on December 10, 2025. But there are mixed views on whether or not the ban has been successful at limiting teens’ access.

Regardless, as social networks and lawmakers continue implementing systems to enforce the age restriction, brands should adjust their Australian go-to-market strategy accordingly.

The Indonesian social media ban

In late March 2026, Indonesia officially passed and implemented a social media ban for users under 16. These users will be blocked from creating or maintaining accounts on Facebook, Instagram, TikTok, YouTube, Threads, X, Bigo Live and Roblox, though the enforcement and account deactivation “will happen gradually,” according to the Indonesian government. The networks must comply with age verification standards set by the government (which are still unclear) or face hefty fines and potential nationwide bans.

The Malaysian social media ban

As part of Malaysia’s Online Safety Act that went into effect on January 1, 2026, the minimum age to use social media in the country has been raised to 16. As in Australia, the responsibility falls on the shoulders of social networks, which are expected to implement the restrictions with electronic know-your-customer (eKYC) checks. These involve users providing official IDs, like passports or MyDigital ID, to verify their age at registration. The ban applies to WhatsApp, Telegram, Facebook, Instagram, TikTok and YouTube.

The French social media ban

In early 2026, French lawmakers in the lower house approved a bill banning social media for children under 15. The bill was adopted by the Senate in March 2026. The ban is expected to go into effect in September 2026, at the beginning of the French school year. However, the Senate introduced amendments that could delay adoption.

While the networks impacted by the ban will technically be decided by France’s media authority, the architect of the bill said the law will be modeled after Australia’s ban. Snapchat, Instagram, TikTok and X are all expected to be affected, though there could be additional platforms included.

The European Commission—the executive branch of the European Union (EU)—is set to enforce the ban. It isn’t currently clear how social platforms are expected to implement the ban in compliance with the accuracy and privacy standards of the EU.

US social media bans

While the brief US TikTok ban over data security was officially overturned thanks to the deal between ByteDance and American investors, several states have implemented their own bans to address minors’ use of social media across other networks.

| State | Who does the ban apply to? | Which networks are impacted? | How is the ban enforced? |
|---|---|---|---|
| Florida | Users under 14 are not allowed to create social media accounts. Users 14-15 need parental consent. | Facebook, Instagram, Snapchat and YouTube | Networks must use third-party age verification software, and face fines up to $50,000 per violation. |
| Mississippi | Users under 18 need parental consent to create social media accounts. “Harmful” material must also be blocked from minors. | Facebook, Instagram, Snapchat, YouTube and Nextdoor | Users must verify their age using government-issued ID, and networks must implement strict age verification. Platforms that fail to comply face $10,000 in penalties per infraction. |
| Tennessee | Users under 18 need parental consent to create social media accounts. Children under 14 cannot appear in monetized videos, and children 14-18 must be compensated for appearing frequently in content. | Facebook, Instagram, Snapchat, YouTube, TikTok and X | Networks must verify a new user’s age, obtain parental consent before allowing minors to create accounts, and allow parents to supervise, modify, and deactivate their child’s account. |
| Virginia | Users under 16 are only allowed to spend one hour per day on each social media platform. | Facebook, Instagram, Snapchat, YouTube, TikTok, X and Reddit | Networks must use “commercially reasonable methods” to determine a user’s age and restrict access. |

To date, bans in states like Arkansas, Georgia, Louisiana and Ohio have been struck down in the courts for vague terms and violating constitutional rights (including freedom of speech).

Other states, including California, Kentucky, Massachusetts and North Carolina, are currently attempting to pass laws that prevent minors from using social media. Some prohibit use without parental consent, while others restrict access outright. New legislative action follows recent verdicts against Google and Meta.

Proposed social media bans to have on your radar

Meanwhile, global legislators are strongly considering social media bans—with some already crafting bills. Here’s a high-level overview of this proposed legislation, which is now impacting almost every region worldwide.

Proposed social media bans in APAC

Following the lead of Australia and Indonesia, other APAC nations are considering social media bans, including:

| Country | Who does the ban apply to? | Which networks are impacted? | How will the ban be enforced? |
|---|---|---|---|
| New Zealand | Users under 16 would no longer be able to access social media or create accounts. | Major networks with user-generated content. | No agency or age verification technology has been designated yet. |
| Philippines | Users under 16 would no longer be able to access social media or create accounts. | Facebook, Instagram, TikTok, X and YouTube | The responsibility will fall on social media networks. |

Proposed social media bans in Europe

At the EU level, the 27 member states are free to set their own age limits for social media use, and many are working on legislation. A coalition has also been formed to unify an approach throughout the continent, and a new EU age verification app suggests the European Commission supports a bloc-wide strategy. While this situation remains fluid, some member states and other European countries are moving forward with their own legislation:

| Country | Who does the ban apply to? | Which networks are impacted? | How will the ban be enforced? |
|---|---|---|---|
| Austria | Users under 14 would no longer be able to access social media or create accounts. | Targets major platforms, with no official list until June 2026. | More details will become available after the entire plan is drafted. |
| Denmark | Users under 15 would no longer be able to access social media or create accounts. | No details regarding which networks will be impacted yet. | Age verification applications enforced by the European Commission. |
| Greece | Users under 15 would no longer be able to access social media or create accounts. | Facebook, Instagram, Snapchat and TikTok | Networks must verify user ages via the Greek “Kids Wallet” app, and face fines of 6% of global revenue for failing to comply. |
| Italy | Users under 15 would no longer be able to access social media or create accounts. | The exact list of networks hasn’t been verified yet. | A “mini-national portfolio” integrated with the European Union’s digital identity system to validate the user’s real age. |
| Norway | Users under 15 would no longer be able to access social media or create accounts. | Awaiting the outcome of the nation’s public consultation, which will determine what constitutes a “social media platform.” | Plans for enforcing the ban haven’t been released. |
| Poland | Users under 15 would no longer be able to access social media or create accounts. | The exact list of networks hasn’t been verified yet. | More details will become available after the draft law is passed, but the responsibility is expected to fall on social platforms. |
| Portugal | Users under 13 would no longer be able to access social media or create accounts. Users 13-16 would only be able to do so with parental consent. | The exact list of networks hasn’t been verified yet. | The national Digital Mobile Key system, which social networks must comply with, will be used to complete age verification. Failure to comply will result in fines up to 2% of global revenue. |
| Slovenia | Users under 15 would no longer be able to access social media or create accounts. | Major networks where user-generated content is shared, including Instagram, Snapchat and TikTok. | Implemented via age-verification technology in cooperation with the Ministry of Digital Transformation. |
| UK | Users under a certain age would no longer be able to access social media or create accounts. | Instagram, Snapchat and TikTok | Implementation details will be announced if the law proceeds after the six-week pilot ends in May 2026. |

Proposed social media bans in LATAM

Nations in Latin America are also mulling over proposed social media bans.

| Country | Who does the ban apply to? | Which networks are impacted? | How will the ban be enforced? |
|---|---|---|---|
| Brazil | Users under 16 would be required to link social media accounts to parental guardians. | The exact list of networks hasn’t been verified yet. | Social media networks are required to “implement effective age verification mechanisms.” Failure to do so could result in fines nearing $10 million. |
| Ecuador | Users under 15 would no longer be able to access social media or create accounts. | Any social media networks that “allow users to create personal accounts, share content publicly, exchange messages and establish visible connections with other users.” | Plans for enforcing the ban haven’t been released, but the responsibility is expected to fall on social media networks. |

Proposed social media bans in SWANA

Countries in North Africa and Southwest Asia have pursued bans of their own as well.

| Country | Who does the ban apply to? | Which networks are impacted? | How will the ban be enforced? |
|---|---|---|---|
| Turkey | Users under 15 would no longer be able to access social media or create accounts. | Networks including Facebook, Instagram, TikTok and YouTube | Social media networks are required to install age-verification systems and parental controls. |
| UAE | Users under 13 would require parental consent to have their data collected, processed, published or shared. Users under 16 would be blocked from viewing high-risk content. | All digital platforms, including social media networks | Social media networks must implement technology to filter content and user experience by age demographic, or face fines up to $32 million. |

Networks are self-regulating in response to public concern

Social media networks are responding to this legislation by updating their platform functionality. Per the Q1 2026 Sprout Pulse Survey, consumers were more likely to support networks implementing parental consent measures and age verification technology over outright bans. In lockstep with consumer preferences, many major platforms now give parents more control over their children’s experience online, including:

- Meta, the parent company of Facebook and Instagram, rolled out “Teen Accounts” to automatically censor age-inappropriate content and provide parents with more notifications related to their child’s behavior on the app. The accounts also allow parents to set scrolling time limits and see who their child can message.

- Snapchat offers the Family Center tool so parents can see who their children are talking to and how much time they’re spending on the platform. The functionality allows guardians to restrict sensitive content, report suspicious users, limit access to the AI chatbot and view their child’s location.

- WhatsApp introduced parent-managed accounts for users under 13 and their parents. With the accounts, parents can choose who can contact their child, manage their child’s privacy settings and supervise their child’s activity.

- YouTube created the dedicated YouTube Kids app to provide a curated feed with a family-friendly experience in mind. The app also offers parents and caregivers oversight and stricter control over the content their children consume.

Other networks use AI-driven technology to restrict content and protect children from unsafe behavior.

- Discord launched the Teen Default Experience, assigning all global users of the platform to an experience appropriate for teens. To unblur adult content, users must be “age-assured as an adult” via facial age estimation technology and the age inference model.

- TikTok uses AI to remove accounts of users under 13 throughout Europe. It uses that same technology to set all under-16 accounts to private and restrict content related to body image or other mature themes for those users.

How brands can prepare for social media bans (and changing consumer behavior)

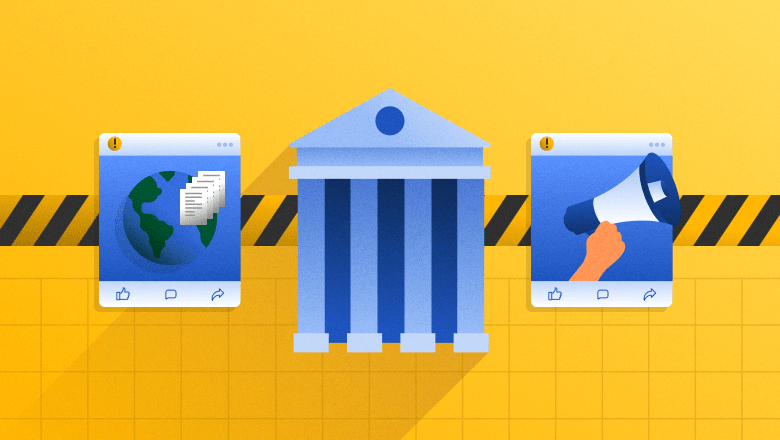

Even if you aren’t operating within the jurisdiction of a social media ban, your audience’s habits may be changing anyway: our Q1 2026 Pulse Survey found that 15% of Gen Z consumers are disconnecting and reducing screen time for their mental health. That raises the question: With new legislation and consumer behavior shifts on the horizon, how should brands prepare?

Channel experimentation

Now is the time to start experimenting on other networks and emerging channels.

According to Sam Morgan-Smith, Head of Social at the UK-based PR agency The Romans, “Platforms like TikTok, Snapchat and Instagram are still culturally powerful, but their viability for reaching younger users is becoming legally precarious, particularly from an organic perspective. Brands need to audit their media mix through a lens of future-proofing—prioritizing platforms that can guarantee verified reach and are adapting to age-gating protocols. Think: YouTube Kids, Discord communities with mod oversight and emerging youth-safe ecosystems within the gaming space (i.e., Fortnite). In short: where you spend has to match where your audience legally can be—and that line is moving fast.”

Tiffany Sayers, co-founder at Australian agency Loft Social, agrees. “Younger consumers are already showing signs of platform fatigue, or at the very least, platform fluidity. Gen Z isn’t loyal to one app; they’re loyal to the experience. So rather than anchoring investment purely by platform, brands should evaluate content fit, consumption patterns and community behaviour. Ask: where is attention moving, not what’s trending today. That means doubling down on community building over broadcast, and treating platforms like TikTok less as ‘social networks’ and more as ‘discovery engines.’ Platform agility is the new brand safety, I reckon.”

Age-aligned content

While the days of trying to reach everyone on social are long behind us, teams can’t rely only on the algorithms to deliver their content to the right people. Legislative headwinds will require brands to be more audience-specific when crafting their content.

Morgan-Smith describes this shift: “The days of blanket posting (or ‘throwing confetti’ as I refer to it) and trusting the algorithm are over. Brands will need to get far more strategic. Content needs to be age-aware and legally defensible.”

Sayers adds, “If underage users are legislatively restricted or platforms are penalized for blurred lines, we’ll need more rigour in how brands brief talent, capture first-party data and define success.”

Morgan-Smith and Sayers outlined what that could look like:

- Organic content: Instead of speaking directly to teens, brands will shift their tone to reach parents, educators or older siblings. Brands should also stay vigilant to emerging requirements, like network-enforced content tags for specific age groups.

- Paid social: Marketers should expect to see increased costs to secure audience reach and tighter targeting, while dealing with a smaller youth inventory. Brands will need to double down on transparency and age-tracking tech to remain compliant, especially if operating across multiple jurisdictions.

- Influencer marketing: All influencers and creators with young audiences will need to comply with robust age-verification protocols, guardian approvals and platform tracking. This could mean more family creators and less attention paid to follower count alone. It will also influence how briefs are written, with more emphasis on co-creating briefs that ensure brand safety and resonance.

Refined brand safety protocols

While many social teams already have some version of brand safety guidelines, their approach needs to get more sophisticated to not only meet network requirements but to comply with legal regulations and show up ethically.

Sayers explains, “We need to move beyond relying solely on platform policies and start embedding internal frameworks. Outside of the obvious—like disclosure and creator education—this includes tighter briefs with age-appropriate messaging, stronger vetting processes, content archiving and moderation protocols, and legal-reviewed guidelines for giveaways, comments and CTAs (more than just captions). Brands need to build processes for long-term digital governance, even if it hurts their vanity metrics. It requires long-term vision, not short-sightedness.”

Morgan-Smith agrees, adding, “Compliance can’t be a bolt-on—it needs to be built into the workflow. That includes governance playbooks (what’s permissible by age, region and platform), data protocols for consent data, age segmentation and targeting rules, and tools that adhere to emerging publishing rules (tagging, audit trails, etc.). Above all, training is fundamental. Not just for social, but all digital-facing departments need regular updates on global legislation, like Children’s Online Privacy Protection Act (COPPA) in the US, the UK Online Safety Act and Australia’s age-verification laws to avoid accidental noncompliance.”
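To make the idea of a governance playbook concrete, here is a minimal Python sketch of a pre-publish check that compares a campaign’s youngest target age against a country’s minimum age. The minimum ages come from the bans described earlier in this article; everything else (the `MINIMUM_AGE_BY_COUNTRY` table, the `CampaignPlan` class and the `review_plan` function) is a hypothetical illustration, not a real tool or a complete rule set. An actual playbook would also need platform-level rules, US state laws and sign-off from legal counsel.

```python
from dataclasses import dataclass
from typing import Optional

# Minimum ages as reported in this article (national level only).
# France's ban is approved but not yet in effect at the time of writing.
# The table name and structure are assumptions made for illustration.
MINIMUM_AGE_BY_COUNTRY = {
    "Australia": 16,
    "Indonesia": 16,
    "Malaysia": 16,
    "France": 15,
}


@dataclass(frozen=True)
class CampaignPlan:
    """A hypothetical record of one planned social campaign."""
    name: str
    country: str
    youngest_target_age: int


def review_plan(plan: CampaignPlan) -> str:
    """Return a short governance note for a planned campaign."""
    minimum_age: Optional[int] = MINIMUM_AGE_BY_COUNTRY.get(plan.country)
    if minimum_age is None:
        # No entry in the playbook: escalate rather than assume it is fine.
        return f"{plan.name}: no rule on file for {plan.country}; check with legal."
    if plan.youngest_target_age < minimum_age:
        return (
            f"{plan.name}: targets ages {plan.youngest_target_age}+ but "
            f"{plan.country} restricts social media for under-{minimum_age}s; revise."
        )
    return (
        f"{plan.name}: target age is at or above the "
        f"{plan.country} minimum of {minimum_age}."
    )


if __name__ == "__main__":
    plans = [
        CampaignPlan("Back to school", "Australia", 13),
        CampaignPlan("Summer launch", "France", 18),
        CampaignPlan("Holiday promo", "Canada", 16),
    ]
    for plan in plans:
        print(review_plan(plan))
```

Keeping the rules in one shared table, rather than in individual briefs, means a change in legislation only has to be recorded once before every upcoming campaign is re-checked against it.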

Real-world immersion

Though younger consumers are known for operating in a digital ecosystem, you shouldn’t overlook the opportunity IRL activations offer to complement your online efforts, especially as operating on organic social becomes more complex.

Sayers advises: “We’re seeing a strong shift back to IRL-led storytelling: micro-events, brand installations, peer-to-peer word of mouth and influencer content that lives beyond a grid. UGC and ambassador-led content seeded via paid media also continues to perform even if organic reach declines.”

Morgan-Smith adds, “As direct social access becomes a little more restricted, the strategic (and smart) pivot is toward hybrid ecosystems that bridge digital influence and real-world immersion. Online, brands should explore gaming integrations, instant messaging and youth-safe content platforms. While in real life, we’re seeing a creative resurgence—including university activations, experience-led marketing and retail theater. This isn’t about abandoning digital—it’s about recalibrating to environments where attention, access and trust intersect.”

Navigating social media bans requires agility: Social media marketers’ superpower

“This is an evolution, not an erosion. Social media is maturing. Compliance challenges signal legitimacy, not demise. It’s moving from the Wild West to a regulated media environment—like TV did decades ago. Rather than abandoning social, this is the moment to reimagine it. Invest in multi-channel ecosystems, build first-party relationships with younger consumers (with consent) and champion network accountability so you can influence how these spaces grow,” says Morgan-Smith.

Sayers adds, “Social still delivers ROI at every stage of the funnel. It just requires smarter systems and more intentional content now. Show leaders that ethical, compliant social is not only possible but powerful. It’s about shifting the conversation with your leaders from ‘how many likes’ to ‘how many hearts and minds.’”

This is just one piece of the larger brand safety picture. Download our comprehensive checklist, which helps you address risks from AI-generated threats to influencer partnerships.
