Inside BlockRank: Google’s Breakthrough AI That Could Revolutionize Information Ranking Forever

Ever wondered why so many AI models slow to a crawl when they try to rank large numbers of documents at once? The devil is in the details, specifically in how these models “pay attention” to all that text. Google DeepMind has introduced BlockRank, a method designed to speed up this process without sacrificing quality. Instead of the usual all-to-all token comparison that drags down performance, BlockRank lets each document focus on itself while the query does the heavy lifting of comparison. The result? Ranking that runs nearly five times faster in the reported experiments and scales to far larger sets of documents. It’s like giving your LLMs a turbo boost, making the search for relevance not just faster but smarter too. Curious how this might rewrite the rules for AI-powered search and retrieval?

Google DeepMind researchers have developed BlockRank, a new method for ranking and retrieving information more efficiently in large language models (LLMs).

- BlockRank is detailed in a new research paper, Scalable In-Context Ranking with Generative Models.

- BlockRank is designed to solve a challenge called In-context Ranking (ICR), or the process of having a model read a query and multiple documents at once to decide which ones matter most.

- As far as we know, BlockRank is not being used by Google (e.g., Search, Gemini, AI Mode, AI Overviews) right now – but it could be used at some point in the future.

What BlockRank changes. ICR is expensive and slow. Models use a process called “attention,” where every token (roughly, every word or word piece) is compared with every other token. That cost grows quadratically with the length of the input, so ranking hundreds of documents at once quickly becomes impractical for LLMs.
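To make that cost concrete, here is a rough back-of-the-envelope sketch. It assumes about 200 tokens per document (derived from the article's own figure of roughly 100,000 tokens for 500 documents) and counts token-to-token comparisons for a single full attention pass, ignoring layers, heads, and causal masking. The function name `full_attention_pairs` is hypothetical.

```python
# Assumption: ~200 tokens per document (100,000 tokens / 500 documents).
TOKENS_PER_DOC = 200


def full_attention_pairs(num_docs, tokens_per_doc=TOKENS_PER_DOC):
    """Count token-to-token comparisons when every token attends to every token."""
    total_tokens = num_docs * tokens_per_doc
    return total_tokens * total_tokens


if __name__ == "__main__":
    for docs in (100, 500):
        print(f"{docs} documents: {full_attention_pairs(docs):,} comparisons")
```

Under these assumptions, five times as many documents means 25 times as many comparisons, which is why long candidate lists become costly so quickly.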

How BlockRank works. BlockRank restructures how an LLM “pays attention” to text. Instead of every document attending to every other document, each one focuses only on itself and the shared instructions.

- The model’s query section has access to all the documents, allowing it to compare them and decide which one best answers the question.

- This transforms the model’s attention cost from quadratic (very slow) to linear (much faster) growth.
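The attention pattern described above can be sketched as a boolean mask. This is a minimal illustration, not the paper's implementation: the function names `block_mask` and `count_allowed`, the segment layout (instructions, then documents, then query), the toy lengths, and the causal left-to-right constraint are all assumptions made for the example.

```python
def block_mask(instr_len, doc_lens, query_len):
    """Return mask[i][j] == True when token i may attend to token j.

    Assumed layout: [instructions][doc 1]...[doc N][query].
    """
    # Segment id per token: 0 = instructions, 1..N = documents, N+1 = query.
    segments = [0] * instr_len
    for doc_id, length in enumerate(doc_lens, start=1):
        segments += [doc_id] * length
    query_id = len(doc_lens) + 1
    segments += [query_id] * query_len

    total = len(segments)
    mask = [[False] * total for _ in range(total)]
    for i in range(total):
        for j in range(i + 1):  # causal: only the token itself and earlier ones
            if segments[i] == query_id:
                # Query tokens see everything before them.
                mask[i][j] = True
            else:
                # Instruction and document tokens see their own segment
                # plus the shared instructions, never other documents.
                mask[i][j] = segments[j] == segments[i] or segments[j] == 0
    return mask


def count_allowed(mask):
    """Number of (i, j) pairs the mask lets through."""
    return sum(sum(row) for row in mask)


if __name__ == "__main__":
    for num_docs in (1, 2, 3):
        mask = block_mask(instr_len=4, doc_lens=[3] * num_docs, query_len=2)
        print(num_docs, "documents:", count_allowed(mask), "allowed pairs")
```

With equal-length documents, each extra document adds the same number of allowed pairs in this sketch, which is the linear growth described above. An unrestricted mask would instead add more pairs with every additional document.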

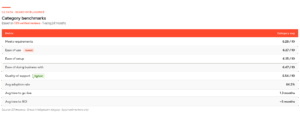

By the numbers. In experiments using Mistral-7B, Google’s team found that BlockRank:

- Ran 4.7× faster than standard fine-tuned models when ranking 100 documents.

- Scaled smoothly to 500 documents (about 100,000 tokens) in roughly one second.

- Matched or beat leading listwise rankers like RankZephyr and FIRST on benchmarks such as MS MARCO, Natural Questions (NQ), and BEIR.

Why we care. BlockRank could change how future AI-driven retrieval and ranking systems work, pushing them to reward user intent, clarity, and relevance. That means (in theory) clear, focused content that aligns with why a person is searching (not just what they type) should increasingly win.

What’s next. Google/DeepMind researchers are continuing to redefine what it means to “rank” information in the age of generative AI. The future of search is advancing fast – and it’s fascinating to watch it evolve in real time.

Search Engine Land is owned by Semrush. We remain committed to providing high-quality coverage of marketing topics. Unless otherwise noted, this page’s content was written by either an employee or a paid contractor of Semrush Inc.
