Inside Bing’s Secret Sauce: How Grounding Revolutionizes Search Beyond Indexing

Ever wondered how search engines will keep up when AI starts pulling answers instead of just tossing links? Microsoft’s Bing team just published a framework that flips the script on traditional indexing. Instead of just figuring out which pages deserve your clicks, the team is now focused on what information an AI can responsibly lean on to craft its responses. Sounds simple, right? It isn’t: the framework covers five areas where search and AI grounding diverge, from handling stale facts that could mislead, to knowing when it’s smarter for AI to just say, “I don’t know.” That last option is what Microsoft calls “abstention,” essentially the AI bowing out when the evidence isn’t convincing enough. After years in the SEO trenches watching algorithms evolve, I’d say this pivot toward grounding AI answers changes how we think about indexing and content quality. Curious about the details behind this shift and what it means for publishers and marketers? You’re in for a treat.

Microsoft’s Bing team published a framework describing how indexing requirements change when the goal is to ground AI answers rather than to rank search results.

The post identifies five measurement areas where the company says the two systems diverge. It also names “abstention” as a design choice for AI-powered retrieval.

What Microsoft Described

The post argues that traditional search indexing and grounding indexing share the same foundation but serve different goals.

Traditional search, the team writes, asks “which pages should a user visit?” The grounding layer asks “what information can an AI system responsibly use to construct a response?”

Microsoft identifies five categories where the measurement requirements differ.

On factual fidelity, the team notes that some ranking mismatch is tolerable in traditional search because a user can click through and evaluate. In grounding, the post describes breaking content into retrievable chunks as a process that “can distort page substance in ways that never appear in any ranking signal.”

For source attribution quality, the Bing team calls attribution helpful in traditional search but “a core signal” in grounding. Not all indexed content matters equally as evidence for an AI answer, the team adds.

On freshness, Microsoft notes a clear difference in cost. Stale content in search is a ranking problem. In grounding, the post says, “a stale fact produces a misleading response.”

For coverage of high-value facts, the post explains that a missed document in search is recoverable because alternative results exist. In grounding, the index must ensure “the specific facts and sources that people are likely to ask about are actually available and groundable.”

On contradictions, traditional search can surface one source above another and let the user decide. A grounding system can’t do that. “An AI system that silently arbitrates between contradictory sources is one that may confidently assert the wrong thing,” the team says.

Abstention And Iterative Retrieval

The post also covers two design differences between the systems.

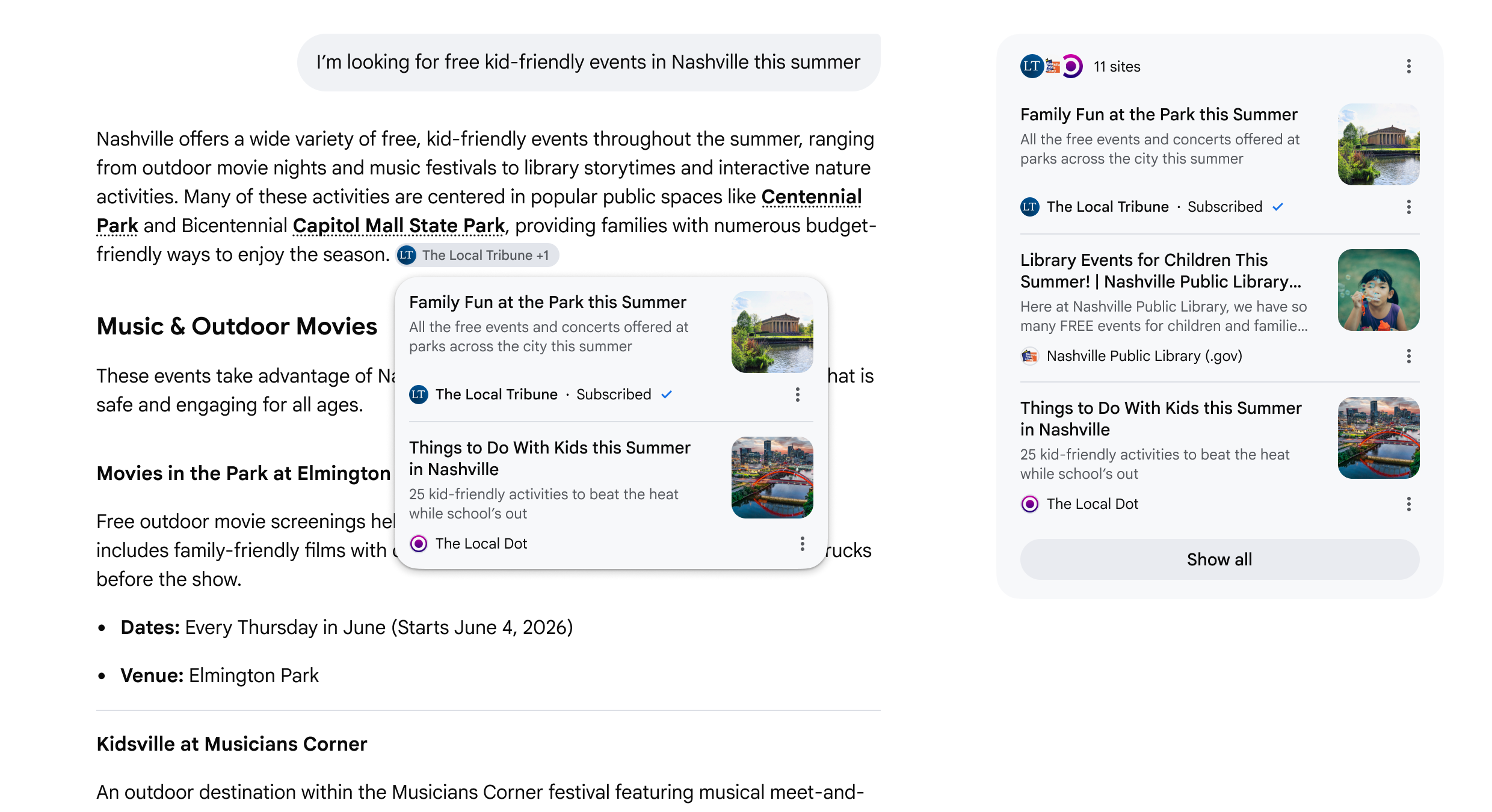

Microsoft calls declining to answer “abstention.” For a grounding system, that’s a valid outcome when support is missing, stale, or conflicting. Traditional search doesn’t need to make this judgment because it presents options for a human to evaluate.
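To make the three abstention conditions concrete, here is a minimal Python sketch of how a grounding layer might decide between answering and declining. It is an illustration under stated assumptions, not Microsoft’s implementation: the `Evidence` record, the `ground_or_abstain` function, the 90-day freshness window, and the rule that any disagreement between fresh sources counts as a conflict are all hypothetical choices made for this example.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional, Tuple


@dataclass
class Evidence:
    """Hypothetical record for one retrieved passage (not a Bing data type)."""
    source: str   # where the passage came from
    claim: str    # normalized value the passage asserts
    as_of: date   # date the passage was last known to be current


def ground_or_abstain(
    evidence: List[Evidence],
    today: date,
    max_age: timedelta = timedelta(days=90),  # assumed freshness window
) -> Tuple[Optional[str], str]:
    """Return (answer, reason); answer is None when the system abstains."""
    # Missing support: nothing was retrieved at all.
    if not evidence:
        return None, "missing"

    # Stale support: every passage is older than the freshness window.
    fresh = [e for e in evidence if today - e.as_of <= max_age]
    if not fresh:
        return None, "stale"

    # Conflicting support: fresh passages disagree, so decline
    # rather than silently picking one of them.
    if len({e.claim for e in fresh}) > 1:
        return None, "conflicting"

    return fresh[0].claim, "supported"
```

In this sketch the function answers only when at least one fresh passage exists and all fresh passages agree. A production system would presumably also weigh source quality and attribution, which the post treats as a separate signal.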

Iterative retrieval is the other difference. Traditional search is typically a single interaction where a query goes in and ranked results come out. Grounding systems may need to ask follow-up questions, refine retrieval based on intermediate results, and combine evidence from multiple sources.

Errors in early retrieval steps “compound through subsequent reasoning steps in ways that no human reviewer would catch in real time,” the post adds.

Context

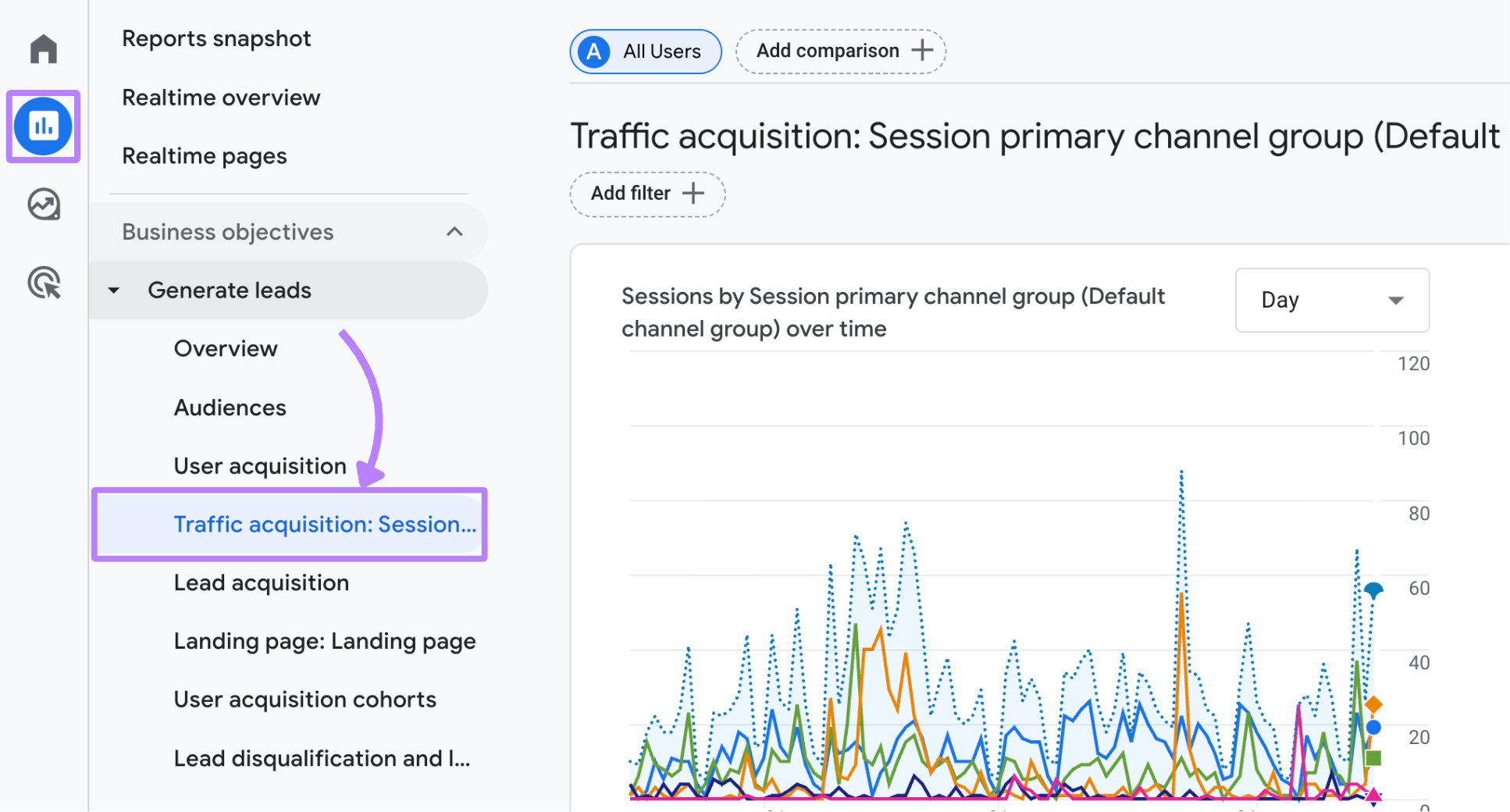

This blog post comes after a series of moves by Microsoft to build out its grounding tooling and give publishers visibility into it.

In February, Microsoft launched the AI Performance dashboard in Bing Webmaster Tools, giving sites their first page-level citation data for AI-generated answers. The company rewrote the Bing Webmaster Guidelines in March to include GEO as a named optimization category and added grounding query-to-page mapping to the dashboard the same month. At SEO Week in April, Madhavan previewed four additional features for the dashboard, including Citation Share and grounding query intent labels.

This post is more conceptual than those prior announcements. It doesn’t introduce new tools or features. Instead, it lays out the engineering principles the company describes as guiding its index evolution.

Why This Matters

This framework clarifies what Microsoft says its systems need from the index for AI answers.

Microsoft says grounding relies on the same crawling, quality, and web understanding as search. Grounded answers, however, also require evidence that is accurate, fresh, attributable, and consistent. Stale facts, weak sources, and contradictions all become risks once content is used to construct an answer rather than to rank a link.

Looking Ahead

The post offers insight into why some content is easier for AI to cite. If the Citation Share and intent-label features previewed at SEO Week ship, they could help test whether the measurement priorities described here show up in actual publisher data.

Featured Image: TY Lim/Shutterstock
