Unlock the Hidden Secrets of SaaS AI Search Optimization with This 8-Step Playbook

Ever wondered how some SaaS brands get all the AI search love while others scramble for a hint of visibility? Buyers no longer punch in simple keywords. They put entire questions to AI search, digging into pricing tiers, integrations, and compliance before they visit a single website. If your SaaS offering isn’t showing up (or worse, is misrepresented), you’re invisible at the critical moment when buyers’ interest is piqued. This isn’t just another SEO guide; it’s an eight-step playbook to help SaaS teams fine-tune their product signals so AI systems notice, accurately interpret, and cite your brand. It also includes a method for tracking citations and measuring business impact over time.

When buyers need to find new software today, they often start in AI search, asking full questions about pricing tiers, integrations, compliance, and use cases. AI tools summarize and compare options before buyers ever reach a website.

If your SaaS brand isn’t mentioned (or is mentioned inaccurately), you lose early visibility at the start of the buying journey.

This guide shows how SaaS teams can strengthen the signals AI systems use to interpret, summarize, and cite their product.

You’ll get an eight-step workflow you can apply across product, pricing, documentation, and comparison pages, plus a method for monitoring citations and measuring impact over time.

Quickstart guide to AI search optimization for SaaS

Getting visible in AI search results requires a different approach than traditional SaaS SEO.

You’re not only competing for rankings. You’re competing for how accurately AI systems summarize, compare, and cite your product in buyer-facing answers.

How does AI search change SaaS visibility?

AI search shifts the goal from ranking for keywords to publishing product information that AI can interpret and reuse. SaaS buyers rarely ask single-intent queries. They ask about pricing tiers, team size, integrations, and compliance, often in one prompt. AI systems pull details from multiple sources and generate a shortlist before the buyer clicks anything.

For SaaS teams, that means structuring product, pricing, documentation, and comparison pages so AI can extract them cleanly.

8 essentials for SaaS AI visibility

Before working through the playbook, here are the eight signals that move SaaS brands into AI answers:

- Consistent product and feature naming across all pages

- Clean, scoped URL structure that’s easy for crawlers to follow

- FAQ schema on help and feature pages

- SoftwareApplication schema with current pricing on product pages

- Glossary and comparison pages built with HTML tables (not images)

- Conversation-led page structure that answers full multi-part prompts

- Off-site expert quotes anchored to data and frameworks

- Monthly citation monitoring tied to a simple ROI model

Each is covered in detail in the eight-step playbook below.

The 8-step SaaS AI search playbook

Let’s break each step down with clear actions, examples, and workflows you can apply directly to your SaaS pages.

1. Audit current AI citations

Before you optimize, you need to know how often—and how accurately—AI engines are already mentioning your SaaS brand. This baseline shows whether you’re invisible, misrepresented, or already gaining traction.

In practice, AI answer engines have an easier time summarizing categories with abundant, consistent public documentation and third-party coverage. Mature SaaS categories often have more review sites, comparisons, implementation guides, and analyst-style content, so those businesses tend to show up more reliably in AI-generated summaries than brands in emerging or niche segments.

How to audit your current AI citations

Start by testing how major AI engines talk about your category.

Run 8-12 realistic prompts your buyers would use, such as:

- “What are the best [your category] tools for startups?”

- “Compare [your brand] vs. [competitor].”

- “Which [category] software integrates with Slack?”

Then check the results in core platforms like ChatGPT, Perplexity, and Google AI Overviews.

Log the following for each response:

- Whether your brand is mentioned at all

- Where it appears in the answer (first, second, or later)

- How accurate the details are (correct, outdated, or wrong)

- Whether the answer includes clickable source links

Then benchmark that visibility against the broader landscape.

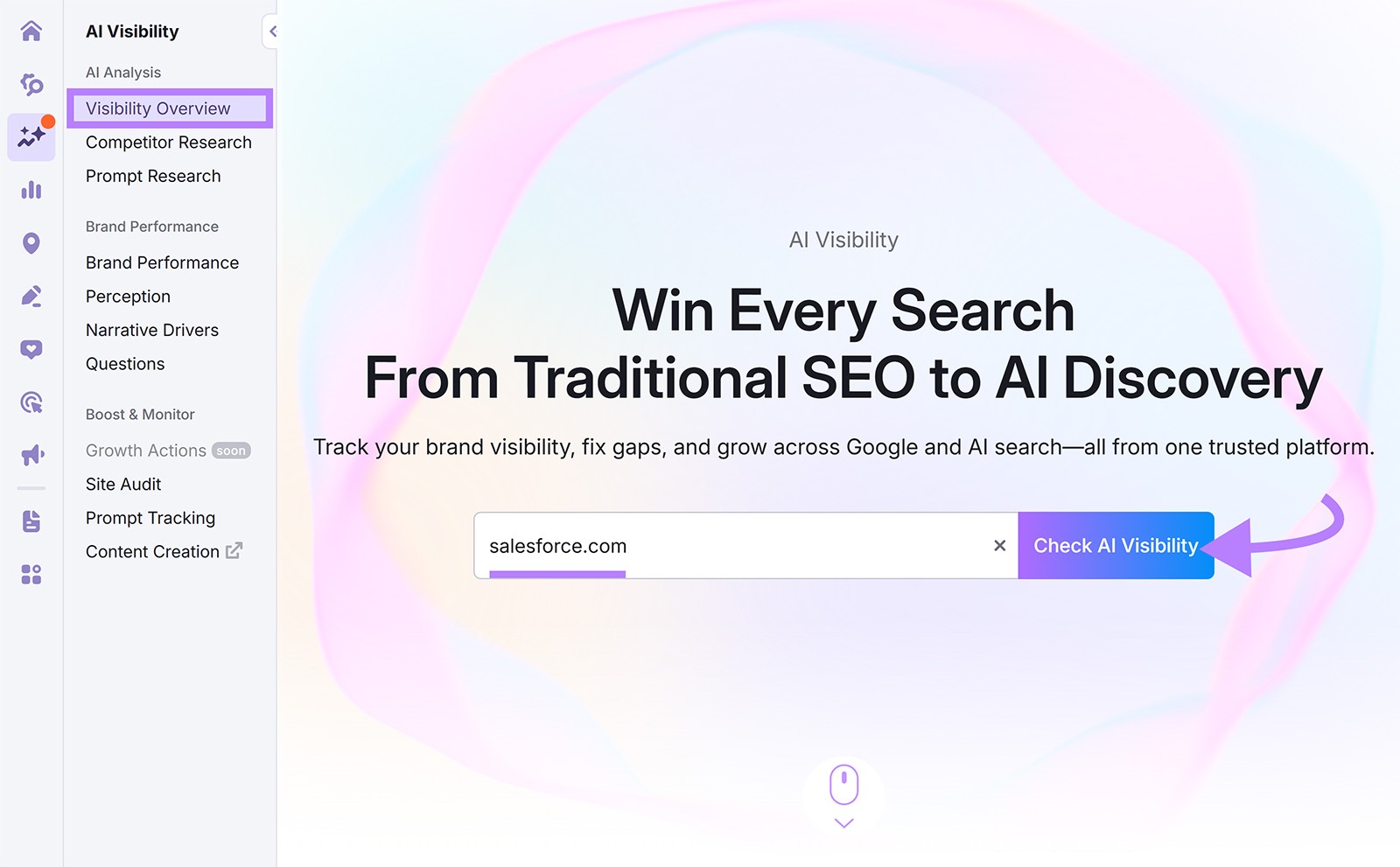

Semrush’s AI Visibility Toolkit draws on a database of 239M+ prompts across ChatGPT, Gemini, Google AI Overviews, and AI Mode, giving you a comparison set bigger than anything you can manually test.

Enter your domain in the Visibility Overview, then filter the Topic Opportunities tab to show prompts where competitors are mentioned but you aren’t.

Then enter your domain and three to five direct competitors in Competitor Research to see which content earns citations, and which queries trigger them.

Export the data and combine it with your manual prompt log for a baseline.

What to expect

After your audit, you should have a snapshot that highlights:

- Average citations per week

- Accuracy of brand mentions (correct vs. outdated)

- Share of voice in AI citations compared to competitors
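If you keep the manual prompt log in a spreadsheet, the snapshot numbers can be rolled up with a few lines of code. The following is a minimal sketch, not a prescribed method: the `PromptResult` fields, the `summarize_baseline` function, and the sample rows are all hypothetical, and "share of voice" is assumed here to mean your mentions divided by all brand mentions (yours plus competitors') in the logged answers.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PromptResult:
    prompt: str
    engine: str                 # e.g. "ChatGPT", "Perplexity", "Google AI Overviews"
    position: Optional[int]     # 1 = mentioned first; None = not mentioned
    accuracy: Optional[str]     # "correct", "outdated", or "wrong"; None if not mentioned
    has_source_link: bool
    competitors_mentioned: int  # how many competitor brands the answer named


def summarize_baseline(results: list[PromptResult]) -> dict:
    """Roll a manual prompt log up into a few baseline ratios."""
    total = len(results)
    mentioned = [r for r in results if r.position is not None]
    correct = [r for r in mentioned if r.accuracy == "correct"]
    brand_mentions = len(mentioned)
    all_mentions = brand_mentions + sum(r.competitors_mentioned for r in results)
    return {
        "mention_rate": brand_mentions / total if total else 0.0,
        "accuracy_rate": len(correct) / brand_mentions if brand_mentions else 0.0,
        "share_of_voice": brand_mentions / all_mentions if all_mentions else 0.0,
    }


# Hypothetical sample log: two prompts checked on two engines.
log = [
    PromptResult("best CRM tools for startups", "ChatGPT", 2, "correct", True, 3),
    PromptResult("best CRM tools for startups", "Perplexity", None, None, True, 4),
    PromptResult("CRM that integrates with Slack", "ChatGPT", 1, "outdated", False, 2),
    PromptResult("CRM that integrates with Slack", "Perplexity", 3, "correct", True, 3),
]
baseline = summarize_baseline(log)
print(baseline)
```

Re-running the same function on later logs gives you a like-for-like comparison against this baseline.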

Timebox: About 30-45 minutes for a full baseline check.

2. Strengthen product and documentation structure for AI crawling

AI engines pull from pages that are easy to interpret, with clear structure, consistent naming, and up-to-date product information.

Strengthening your product and documentation pages gives AI systems clearer signals to work with before you touch schema or do any content rewrites.

How to strengthen product and documentation structure

Start with the core areas AI parsers rely on most:

- Use consistent product and feature names across your site: Call the same feature by the same name on product pages, comparison pages, docs, and FAQs. This helps AI systems (and humans) recognize it as one entity instead of many similar-but-different concepts.

- Clarify your URL structure: Clean, scoped URLs make it easier for crawlers to understand which pages cover which parts of your product. Use predictable, descriptive paths for pricing, features, integrations, and documentation.

- Cross-link related assets: This creates a crawlable path that shows how your product, docs, and support content connect. From a feature page, link directly to:

  - The relevant documentation article

  - Any comparison page where that feature matters

  - Related FAQs

- Keep product data current in one source of truth: This reduces the chance that AI systems (or buyers) will see different versions of the same information. Centralize pricing, plan names, feature lists, and integration details in one internal source, then:

  - Update product pages first

  - Sync documentation, comparison pages, and FAQs against that source

A clear structure removes ambiguity and helps AI engines extract the correct details, especially for SaaS categories with overlapping terminology.
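One way to spot inconsistent feature naming is to scan exported page text for known off-brand variants. The sketch below assumes you already have page text keyed by URL; the `CANONICAL_NAMES` mapping, the URLs, and the `find_naming_drift` helper are hypothetical examples, and a real check would need your own list of variants.

```python
import re

# Hypothetical mapping: canonical feature name -> variants that should not appear.
CANONICAL_NAMES = {
    "Slack integration": ["Slack connector", "Slack sync"],
    "Audit logs": ["Activity history", "Audit trail"],
}


def find_naming_drift(pages: dict[str, str], canonical: dict[str, list[str]]):
    """Return (url, variant_found, canonical_name) for every off-brand variant."""
    findings = []
    for url, text in pages.items():
        for name, variants in canonical.items():
            for variant in variants:
                # Whole-phrase, case-insensitive match.
                if re.search(rf"\b{re.escape(variant)}\b", text, flags=re.IGNORECASE):
                    findings.append((url, variant, name))
    return findings


# Hypothetical page text, e.g. exported from your CMS.
pages = {
    "https://example.com/features/slack": "Our Slack integration posts updates in real time.",
    "https://example.com/docs/slack": "Enable the Slack connector from Settings.",
    "https://example.com/pricing": "Audit trail is available on the Business plan.",
}
drift = find_naming_drift(pages, CANONICAL_NAMES)
for url, variant, name in drift:
    print(f"{url}: found '{variant}', expected '{name}'")
```

A simple phrase match like this will miss paraphrases, so treat the output as a starting list for manual review.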

Optional: experiment with an “llms.txt” file

You can test an llms.txt file as a light experiment, not a core requirement. The format isn’t a formal standard, and there’s no confirmed evidence that AI crawlers consistently use it today.

Some teams are experimenting with the file to see if it’ll help AI parsers find authoritative pages faster. But as of now, there’s no proven correlation between using llms.txt and higher AI citation volume.

If you want to try it, keep it simple:

- Include only your most accurate, up-to-date product, pricing, documentation, and comparison pages

- Keep the file small and curated (a short list, not a second sitemap)

- Treat it as a supplementary hint, not your primary AI visibility strategy
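If you do run the experiment, a small script keeps the file curated and easy to regenerate. This sketch follows the layout described in the public llms.txt proposal as we understand it (an H1 title, a blockquote summary, then H2 sections of Markdown links); the site name, URLs, and the `build_llms_txt` helper are hypothetical, and nothing here implies that any AI crawler reads the file.

```python
def build_llms_txt(site_name: str, summary: str,
                   sections: dict[str, list[tuple[str, str, str]]]) -> str:
    """Render a short, curated llms.txt as Markdown text."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical curated list: only the most accurate, current pages.
llms_txt = build_llms_txt(
    "ExampleCRM",
    "CRM for small agencies. Pricing and limits below are kept current.",
    {
        "Product": [
            ("Pricing", "https://example.com/pricing", "Plans and per-seat prices"),
            ("Integrations", "https://example.com/integrations", "Supported tools"),
        ],
        "Docs": [
            ("Slack setup", "https://example.com/docs/slack", "Setup steps"),
            ("ExampleCRM vs. Tool B", "https://example.com/compare/tool-b", "Feature comparison"),
        ],
    },
)
print(llms_txt)
```

Generating the file from a short list in code makes it harder for it to grow into a second sitemap.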

To prioritize which URLs to refine and include:

In Semrush’s Site Audit, find high-traffic pages that:

- Lack structured data

- Sit outside your main sitemap

- Contain outdated product information

Then use On Page SEO Checker to review metadata consistency (titles, descriptions, H1s, and internal links) before and after you clean up structure.

What to expect

After tightening product and documentation structure, you should see:

- Clearer crawl paths between product pages, docs, FAQs, and comparisons

- Fewer conflicting versions of core details like pricing, plan names, and key features

- Stronger foundations for later steps like FAQ schema, SoftwareApplication schema, and comparison content

- If you test llms.txt, a small, curated list that’s easy to maintain and aligns with your most important SaaS pages

Timebox: About one hour for an initial pass on core product, pricing, and documentation URLs (plus extra time if you test llms.txt).

3. Add FAQ schema to help and feature pages

AI engines rely on clear, structured answers when assembling responses.

FAQ content is naturally formatted as concise, self-contained answer blocks, which reduces the chance of your product details being paraphrased incorrectly.

FAQ schema reinforces that structure for crawlers and helps keep answers consistent across search surfaces.

How to add FAQ schema effectively

Start with real questions from customers, support tickets, or sales calls, not generic FAQs. They should reflect how users ask questions:

- Keep each answer short, factual, and self-contained

- Use present-tense language

- Include version numbers or “as of” dates when relevant

- Remove marketing fluff

For example:

Q: Does your CRM integrate with Slack?

A: Yes. Our CRM includes a native Slack integration that posts updates and reminders in real time.

Once you’ve drafted your FAQs, convert them into clean JSON-LD.

For example:

    {
      "@context": "https://schema.org",
      "@type": "FAQPage",
      "mainEntity": [{
        "@type": "Question",
        "name": "Does your CRM integrate with Slack?",
        "acceptedAnswer": {
          "@type": "Answer",
          "text": "Yes. Our CRM includes a native Slack integration that posts updates and reminders in real time."
        }
      }]
    }

Keeping your schema small and consistent makes it easier for AI systems to interpret and extract.
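When you have more than a handful of questions, generating the markup from a list is less error-prone than hand-editing JSON. The sketch below builds the same FAQPage structure shown above from question/answer pairs; the `faq_jsonld` and `to_script_tag` helpers and the second sample question are hypothetical, and you would still want to validate the output before publishing.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def to_script_tag(data: dict) -> str:
    """Serialize JSON-LD for embedding in an HTML page."""
    # Escape "</" so answer text can never close the script element early.
    payload = json.dumps(data, indent=2, ensure_ascii=False).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


faqs = [
    ("Does your CRM integrate with Slack?",
     "Yes. Our CRM includes a native Slack integration that posts updates "
     "and reminders in real time."),
    # Hypothetical second entry, for illustration only.
    ("Is single sign-on available?",
     "Yes. SAML single sign-on is available on the Business plan as of January 2026."),
]
faq_data = faq_jsonld(faqs)
faq_tag = to_script_tag(faq_data)
print(faq_tag)
```

Keeping the questions in one list also makes it easier to reuse the exact same wording in the visible FAQ on the page.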

To identify the right FAQ topics and validate your markup:

- In Keyword Magic Tool, use the Questions filter to surface phrasing patterns your users already use

- Select the top 10-15 recurring questions and map them to your help docs or feature pages

- Use On Page SEO Checker to validate your JSON-LD placement and check for any markup errors

- After publishing, run a quick Site Audit to confirm crawlers are detecting the FAQ markup on the page

What to expect

Clean FAQ schema on help and feature pages can lead to:

- More FAQ entities indexed

- More pulls from these fields in AI-generated answers

- Fewer inconsistencies across platforms when describing your product

Timebox: Two to three hours to research, draft, implement, and validate.

4. Build glossary and comparison pages

AI engines prioritize precise, high-confidence sources. So glossary and comparison content often become the reference set AI models use when summarizing a SaaS category.

Clear definitions and structured comparison data can increase your chances of being cited in conversational answers.

How to build glossary and comparison content that AI trusts

Start with glossary pages. Use a simple, repeatable structure so AI systems can extract meaning consistently:

- Definition: One sentence in plain language

- How it works: A short, concrete explanation

- Why it matters: A practical benefit or use case

- Related terms: Two or three cross-links

For example, here’s how our listing for the term “canonical URL” shows up in our SEO Glossary:

For SaaS glossaries, include terms buyers evaluate during software selection. Useful entries often include:

- API rate limits: How request caps work and why they matter for integration-heavy workflows

- SOC 2 compliance: What the framework covers and what it signals about a vendor’s security posture

- User provisioning: How automated onboarding works and why it reduces admin overhead

Next, build comparison pages that answer “What’s the difference between X and Y?” in a structured, extractable format:

- Use HTML tables, not images, for features and pricing

- Add “as of” dates to pricing

- Include “Best for…” summaries tied to real SaaS use cases

- End with a clear recommendation mapped to constraints (budget, compliance, integrations)

AI systems may restate comparison tables without context or blend your data with other sources. Add “as of” dates to pricing and limits, separate objective facts from positioning language, and re-check your top comparison prompts monthly (“[Brand] vs [Competitor]”) to catch misquotes early. If you find errors, update the source page first, then use platform feedback tools.

For SaaS, include tier constraints directly in the table (SSO availability, API limits, user provisioning, audit logs) because buyers and AI systems treat those as decision-critical differentiators.
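To keep comparison data in real HTML rather than images, you can render the table from structured rows. This is a minimal sketch: the `comparison_table` helper is hypothetical, and the prices and tier details for "Tool A" and "Tool B" are placeholder values, not real product data.

```python
from html import escape


def comparison_table(caption: str, columns: list[str],
                     rows: list[list[str]], as_of: str) -> str:
    """Render a plain HTML comparison table with an 'as of' date in the caption."""
    head = "".join(f'<th scope="col">{escape(col)}</th>' for col in columns)
    body_rows = []
    for row in rows:
        label, *values = row
        cells = f'<th scope="row">{escape(label)}</th>'
        cells += "".join(f"<td>{escape(value)}</td>" for value in values)
        body_rows.append(f"    <tr>{cells}</tr>")
    return "\n".join([
        "<table>",
        f"  <caption>{escape(caption)} (as of {escape(as_of)})</caption>",
        f"  <thead><tr>{head}</tr></thead>",
        "  <tbody>",
        *body_rows,
        "  </tbody>",
        "</table>",
    ])


# Placeholder values for illustration only.
table_html = comparison_table(
    caption="Tool A vs. Tool B",
    columns=["Feature", "Tool A", "Tool B"],
    rows=[
        ["Price per user/month", "$49", "$79"],
        ["SSO (SAML)", "Business plan and up", "All plans"],
        ["API rate limit", "100 requests/min", "500 requests/min"],
        ["Audit logs", "Yes", "Yes"],
    ],
    as_of="January 2026",
)
print(table_html)
```

Row headers (`scope="row"`) and a caption keep each value tied to its feature and date even if a parser lifts a single row out of context.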

To identify which terms and comparisons to prioritize, start with competitive research.

Use Semrush’s Keyword Gap tool, filtered to the Missing tab, to find competitor glossary and comparison topics you don’t rank for at all.

Use Topic Research to generate question clusters and related themes for your glossary or comparison set.

In Site Audit, filter for your existing glossary/comparison URLs and refresh outdated images, pricing, or definitions.

What to expect

By the end of this step, you should have:

- A starter glossary list (10-20 terms) with a consistent structure across entries

- At least one comparison page template that uses HTML tables for pricing and features

- A refresh checklist for keeping definitions, limits, and pricing current (“as of” dates, plan changes, renamed features)

Timebox: One to two days for the initial set.

5. Optimize for conversation-led queries

AI engines don’t look for keywords. They look for context.

Modern SaaS buyers phrase questions as full scenarios, like “best CRM for 50-person remote teams,” instead of short phrases like “CRM software.”

Structuring your content around these multi-part prompts helps AI interpret it correctly and cite it in complex answers.

How to optimize for conversation-led queries

Start by mapping the query fan-out: the sub-questions AI engines create when analyzing a complex prompt.

These usually include:

- Scenario: Who’s asking or in what situation

- Constraints: Budget, team size, or tech stack

- Integrations: Tools it must connect with

- Timelines: Implementation or setup expectations

- Security/compliance: Enterprise-readiness signals

SaaS prompts often split into two paths: product-led evaluation (trial, onboarding time, team adoption) and procurement evaluation (security, SSO, contracts, data residency). Structure pages so both paths are explicitly answerable.

Use Semrush’s Keyword Magic Tool with the Questions filter to surface the natural-language phrasing buyers actually use: “best CRM for remote teams,” “CRM with Slack alerts,” “CRM under $50/user.”

Rewrite your pages so they answer these fan-out questions directly. For example:

Prompt context: A buyer searching for the “best CRM for a 40-person agency that needs HubSpot migration, Slack alerts, SOC 2, and a plan under $80/user.”

Keyword-first content (before): “CRM tools help teams manage pipelines. Many CRMs offer integrations and reporting.”

Conversation-led content (after): “For a 40-person agency under $80/user that needs Slack alerts and HubSpot migration, Tool A is a strong fit. Tool A supports SOC 2, includes native Slack notifications, and offers HubSpot import with guided setup. Teams that require SSO on the base plan may prefer Tool B, which includes SAML earlier but has higher per-seat pricing.”

When you rewrite pages for these prompts, add explicit sections for limits and constraints (plan caps, API limits, SSO availability by tier, onboarding time, required admin effort). Those are the details AI systems tend to compress, and the details most likely to get misstated if your page is vague.

Structure your content so each section mirrors this flow:

- Lead with the answer: State your recommendation or takeaway up front

- Add evidence: Data, examples, or customer proof that backs it up

- Close with a next step: Simple action or setup instruction

What to expect

Pages restructured this way are more likely to surface in AI answers, with clearer placement and stronger engagement.

You’ll likely see:

- Higher citation positions in complex, multi-facet AI answers

- Increased reader scroll depth and engagement

- Noticeable uplift in featured-answer extractions

Timebox: About two to three days to retrofit your top three pages.

6. Implement SoftwareApplication schema on product and pricing pages

AI engines depend on structured data to understand what your product is and how it works.

SoftwareApplication schema helps you publish consistent details about your category, pricing, platform, and features, giving your SaaS pages the clear, machine-readable context needed for accurate citations and rich results.

Google hasn’t confirmed that SoftwareApplication schema directly influences AI Overviews. But it’s still a practical way to reduce ambiguity in how your product is represented across search systems.

How to add and maintain SoftwareApplication schema

Add a concise JSON-LD SoftwareApplication schema block to your main product and pricing pages. Focus on essential fields:

- name, applicationCategory, operatingSystem

- offers (price, currency, billing frequency)

- featureList (three to five core capabilities)

If you have monthly vs. annual pricing or tiered packaging, reflect billing frequency and “starting at” language consistently across UI and structured fields to reduce pricing confusion in summaries.

Keep these fields current—especially pricing and version numbers—to avoid outdated information circulating through AI summaries.

Here’s an example snippet (customize it for your product):

    {
      "@context": "https://schema.org",
      "@type": "SoftwareApplication",
      "name": "Your SaaS Name",
      "applicationCategory": "BusinessApplication",
      "operatingSystem": "Web-based",
      "offers": {
        "@type": "Offer",
        "price": "29",
        "priceCurrency": "USD"
      },
      "featureList": ["Team collaboration", "Project tracking", "Time logging"]
    }

But SaaS pricing and features change often, and that’s where schema errors typically creep in.

To reduce that risk:

- Add “priceValidUntil” (and, where useful, “validFrom”) to the Offer to signal freshness

- Update schema whenever pricing or packaging changes

- Avoid listing every feature; only include capabilities that rarely change

- Keep Offer/Product schema consistent across URLs to prevent conflicts
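One way to keep schema from drifting is to generate it from the same source of truth that feeds your pricing page, then compare it with what is currently published. The sketch below assumes a hypothetical internal `PRODUCT` record and hypothetical helper names; the price, dates, and the "published" snippet are placeholders.

```python
from datetime import date

# Hypothetical internal source of truth for product facts.
PRODUCT = {
    "name": "Your SaaS Name",
    "category": "BusinessApplication",
    "price": "29",
    "currency": "USD",
    "price_valid_until": date(2026, 12, 31),
    "features": ["Team collaboration", "Project tracking", "Time logging"],
}


def software_application_jsonld(product: dict) -> dict:
    """Build SoftwareApplication JSON-LD from the source-of-truth record."""
    return {
        "@context": "https://schema.org",
        "@type": "SoftwareApplication",
        "name": product["name"],
        "applicationCategory": product["category"],
        "operatingSystem": "Web-based",
        "offers": {
            "@type": "Offer",
            "price": product["price"],
            "priceCurrency": product["currency"],
            "priceValidUntil": product["price_valid_until"].isoformat(),
        },
        "featureList": product["features"],
    }


def stale_fields(published: dict, expected: dict, today: date) -> list[str]:
    """List Offer fields on a live page that disagree with the source of truth."""
    problems = []
    for key in ("price", "priceCurrency"):
        if published["offers"].get(key) != expected["offers"][key]:
            problems.append(key)
    valid_until = published["offers"].get("priceValidUntil")
    if valid_until is None or date.fromisoformat(valid_until) < today:
        problems.append("priceValidUntil")
    return problems


expected_schema = software_application_jsonld(PRODUCT)
# Placeholder for markup scraped from a live page that was never updated.
published_schema = {"offers": {"@type": "Offer", "price": "25", "priceCurrency": "USD"}}
issues = stale_fields(published_schema, expected_schema, today=date(2026, 1, 15))
print(issues)
```

Running a check like this whenever pricing or packaging changes catches the mismatch before it circulates.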

Use Semrush’s Site Audit to check schema coverage and detect missing or incorrect markup.

Then use Log File Analyzer (available with the SEO Toolkit) to confirm bots are reaching your product and pricing URLs consistently.

Re-run audits monthly to make sure version numbers and pricing fields stay accurate.

What to expect

After implementation, you should have a consistent structured-data layer that:

- Reduces ambiguity around product category, pricing fields, and core features

- Lowers the risk of stale pricing/packaging details being copied across your site

- Improves eligibility for rich results in traditional search

Timebox: About two to four hours for setup and validation.

7. Create an expert quote database

AI engines give weight to trusted voices. They often cite experts, not just brands.

Building a small, reusable library of expert insights helps your content and founders get referenced in articles, interviews, and AI-generated summaries.

How to build a reusable quote library

Start with a lightweight quote library you can expand over time.

For established teams, that may mean collecting 20-30 short, quotable insights from subject-matter experts, founders, or data leads. For early-stage SaaS, even five to ten quotes are enough to start appearing as a credible source.

Each quote should:

- Include a data point or framework (e.g., “According to our 2025 benchmark”)

- Be time-stamped and tied to a specific context (e.g., “Q3 2025, post feature launch”)

- Stay within one or two sentences so it’s easy to cite

If you don’t have formal research or published studies yet, you can repurpose:

- LinkedIn posts from founders

- Product update announcements

- Onboarding or support insights (“Most teams adopt/accomplish X within their first week…”)

- Internal metrics that you’re comfortable making public

Store everything in a shared spreadsheet or database with fields like “topic,” “quote,” “speaker,” “date,” “source URL,” and “status (active/retired).” This lets team members across the organization grab consistent, on-brand quotes for various assets.

Use the quote library as a source for PR responses, partner co-marketing, founder content, and product announcements. Consistent reuse across external domains increases the odds that AI systems encounter and reuse your expert statements.

Review the log monthly to retire outdated stats, refresh quotes tied to old pricing or product names, and identify new topics worth adding to your quote library.
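If the library lives in a database or an exported spreadsheet, the monthly review can start from a filtered list. This sketch mirrors the fields named above; the `Quote` class, the `due_for_review` helper, the one-year age threshold, and the sample entries are assumptions for illustration (the quote text is placeholder copy, not real data).

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Quote:
    topic: str
    quote: str
    speaker: str
    quoted_on: date          # the "date" field in the spreadsheet
    source_url: str
    status: str = "active"   # "active" or "retired"


def due_for_review(quotes: list[Quote], today: date, max_age_days: int = 365) -> list[Quote]:
    """Return active quotes older than max_age_days (an assumed threshold)."""
    return [
        q for q in quotes
        if q.status == "active" and (today - q.quoted_on).days > max_age_days
    ]


# Placeholder entries; replace with your own quotes.
library = [
    Quote("onboarding", "[placeholder onboarding insight]", "Head of Support",
          date(2025, 9, 15), "https://example.com/blog/onboarding"),
    Quote("pricing", "[placeholder quote tied to 2024 pricing]", "Founder",
          date(2024, 6, 1), "https://example.com/blog/pricing"),
    Quote("security", "[placeholder quote about an old feature name]", "CTO",
          date(2023, 3, 10), "https://example.com/blog/security", status="retired"),
]
to_review = due_for_review(library, today=date(2026, 1, 10))
for q in to_review:
    print(f"Review: {q.topic} ({q.speaker}, {q.quoted_on.isoformat()})")
```

Age is only a proxy: a quote tied to a renamed feature can be stale after a week, so a manual pass is still needed.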

This gives search engines and AI tools more structured, quotable material to work with and helps your brand build topical authority even before you have a large content footprint.

What to expect

Once your quote database is in regular use, you’re more likely to see:

- More consistent mentions in blogs, media, and partner content

- Wider domain diversity in off-site citations

- Faster turnaround on PR and thought-leadership opportunities

Timebox: About one week to compile and publish your initial set.

8. Monitor AI search mentions and measure ROI

AI engines evolve quickly. What’s accurate this month may be outdated next month. The stakes for staying current are high: Semrush’s research shows the average AI search visitor is worth roughly 4.4x more in conversion value than a traditional organic search visitor.

Consistent monitoring lets you spot new citations, detect errors, and correct misinformation before it spreads. Pair that visibility tracking with a lightweight ROI model so you can connect AI mentions to pipeline impact over time.

How to set up a weekly and monthly tracking routine

Start with a weekly check-in that covers AI outputs and accuracy.

Test five to eight high-intent prompts across ChatGPT, Perplexity, and Google AI Overviews. Focus on:

- Your main product queries

- Category-level prompts

- Key comparison prompts (for example, “[your brand] vs. [competitor]”)

For every prompt, log:

- Whether your brand is mentioned

- Where it appears in the answer (first, second, or later)

- Whether pricing, features, and integrations are correct, outdated, or missing

- Whether a clickable source link is included

Screenshot meaningful changes over time. Save examples where your brand appears or disappears, where a competitor replaces you in a recommendation slot, or where details like pricing or security claims shift.

SEO strategist Ankush Gupta shared an example where Google Search Console impressions increased while click-through rate (CTR) dropped, even though rankings stayed stable. That pattern may indicate visibility shifting from clickable results to AI-generated answers. Users are seeing citations and summaries without visiting the site. For SaaS, that creates an attribution gap unless you track mentions, accuracy, and assisted conversions over time.

Fix issues at the source, then flag them in the tools:

- Update pricing pages, documentation, FAQs, and schema first

- Then use each platform’s feedback tools to report inaccuracies:

  - ChatGPT and Perplexity: Use the “Report” or “Thumbs down” option on the response

  - Google AI Overviews: Use the “Feedback” link on the overview panel

These controls don’t guarantee a fast update, but they’re the expected way to signal errors. To learn more about how AI systems choose and rotate citations, see our guide on AI citations.

Next, add a simple monthly ROI layer so visibility doesn’t become a vanity metric.

How to build a monthly AI citation ROI model

Start by attributing visits and conversions that originate from AI surfaces like ChatGPT, Perplexity, or Google AI Overviews.

- Use UTM parameters or referral tags when AI platforms provide clickable links, and track assisted conversions to account for zero-click visibility

- Track “visit > lead > conversion” in GA4 or your CRM

- Log the number of citations your brand receives during the same period

- Record monthly costs for tools, content creation, and monitoring

Then calculate ROI:

ROI = (AI revenue – AI costs) / AI costs x 100

For example, if AI-linked pages bring in 50 visits, five leads, and one closed deal worth $1,200, and your monthly AI effort costs $400:

- ROI: (1,200 – 400) / 400 x 100 = 200%

- Value per citation: If those 50 visits came from 30 citations: 1,200 / 30 = $40 per citation

This gives you a directional sense of business impact, which is important because many AI results are zero-click. Treat AI-driven attribution as trend data, not an exact measurement.
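The arithmetic above is simple enough to keep in a small script so the monthly numbers are computed the same way each time. The function names below are hypothetical, and the inputs are the figures from the worked example.

```python
def ai_roi_percent(revenue: float, costs: float) -> float:
    """ROI = (AI revenue - AI costs) / AI costs x 100."""
    if costs <= 0:
        raise ValueError("costs must be positive")
    return (revenue - costs) / costs * 100


def value_per_citation(revenue: float, citations: int) -> float:
    """Revenue attributed to AI surfaces divided by citations in the same period."""
    if citations <= 0:
        raise ValueError("citations must be positive")
    return revenue / citations


# Figures from the example above.
visits, leads, deals = 50, 5, 1
revenue, costs, citations = 1200, 400, 30

roi = ai_roi_percent(revenue, costs)
per_citation = value_per_citation(revenue, citations)
visit_to_lead = leads / visits
lead_to_deal = deals / leads

print(f"ROI: {roi:.0f}%")
print(f"Value per citation: ${per_citation:.2f}")
print(f"Visit-to-lead rate: {visit_to_lead:.0%}, lead-to-deal rate: {lead_to_deal:.0%}")
```

With one closed deal in the sample, a single win or loss swings the result heavily, which is another reason to read these numbers as a trend.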

To keep this operational, combine three inputs in one Looker Studio view:

- AI citation logs (count + accuracy)

- GA4 traffic from AI-referred sources when available

- CRM data (lead > pipeline > revenue)

Seeing citations and revenue together prevents “visibility reporting” from drifting into vanity metrics.
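For the GA4 input, one rough approach is to label sessions by referrer hostname before they reach the dashboard. The hostname list below is an assumption, not an official or complete list, and referrers are often missing entirely; `classify_referrer` is a hypothetical helper. Clicks from Google AI Overviews generally arrive as ordinary Google referrals, so a hostname check like this won't isolate them.

```python
from typing import Optional
from urllib.parse import urlparse

# Assumed referrer hostnames; verify against your own analytics data.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
}


def classify_referrer(referrer: str) -> Optional[str]:
    """Return the AI platform name for a referrer URL, or None if unrecognized."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)


# Hypothetical referrer values from an analytics export.
referrers = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=best+crm",
    "https://www.google.com/",
    "",
]
labels = [classify_referrer(r) for r in referrers]
print(labels)
```

Sessions labeled `None` are not necessarily non-AI traffic; they simply can't be attributed by referrer alone.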

How to connect this to Semrush

In Semrush’s AI Visibility Toolkit:

- Set up a custom Position Tracking project to monitor a specific list of high-value prompts daily across ChatGPT, Gemini, AI Overviews, and AI Mode, not just keywords

- Track share of voice shifts for your SaaS category over time

- Export a monthly summary showing mentions, accuracy, and citation trends to compare against GA4/CRM outcomes

What to expect

By the end of this step, you should have:

- A weekly log of AI mentions, ranking position, and accuracy by prompt

- A repeatable monthly ROI calculation tied to revenue and costs

- A simple dashboard view that shows whether AI visibility is translating into pipeline movement

Timebox: 15-30 minutes per week, plus about one hour per month for ROI updates.

Common pitfalls in SaaS AI search optimization

Even teams that follow the playbook closely run into the same handful of issues. Watch for these six.

Optimizing for branded queries only

Branded prompts (“What is [your brand]?”) give an inflated read on visibility: because your brand is already in the question, AI engines will mention you regardless. Test category-level prompts (“What’s the best [category] for [scenario]?”) to see whether you actually surface when buyers don’t know your name yet.

Letting schema lag behind UI changes

Pricing, plan names, and feature lists shift faster than most teams update their structured data. AI models extract whatever the schema says, so stale fields spread outdated information across summaries. Re-audit SoftwareApplication and FAQ schema whenever pricing, packaging, or core features change.

Treating llms.txt as a primary strategy

The llms.txt format isn’t a confirmed ranking signal, and there’s no proven correlation between using it and higher AI citation volume. Some teams test it as a supplementary hint, but it shouldn’t replace schema, FAQ structure, or comparison content as core AI visibility work.

Using platform feedback tools without fixing the source

Reporting an inaccurate ChatGPT response or thumbs-downing a Perplexity answer doesn’t update your underlying pages. Always update the source page first—pricing, documentation, FAQs, schema—then use platform feedback as a secondary signal. AI systems re-crawl periodically, and the source change does the actual work.

Image-based comparison tables

Tables saved as screenshots or infographics are effectively invisible to AI extraction. Crawlers parse HTML text, so comparison data that lives only in a JPEG is unlikely to be cited. Use HTML tables for any comparison content you want cited: features, pricing, tier constraints, integration support.

Generic thought-leadership quotes without data anchors

Quotes that read like marketing taglines don’t get cited. AI engines prefer expert statements with a number, study, or repeatable framework attached (“Based on our 2026 SaaS pricing benchmark…” rather than “We believe in customer success”). Anchor every reusable quote to a specific data point or context.

What’s next for SaaS AI search

AI engines are moving toward fewer clicks and higher precision. For SaaS, that means AI systems will get better at summarizing the details buyers actually evaluate: plan limits, pricing tiers, integration depth, and security posture.

The advantage will shift to teams that maintain a single source of truth for product facts and keep those facts consistent across product pages, docs, FAQs, and comparison content. Freshness and consistency will matter more than publishing volume, because AI systems can’t summarize what they can’t reliably interpret.

Over time, expect AI answers to get more precise about the details that drive SaaS decisions: plan limits, SSO availability by tier, audit logs, data residency, API caps, and integration depth. Teams that make those facts easy to extract—and easy to keep current—will show up more often and get misquoted less.

FAQs about SaaS AI search optimization

Do I need an “llms.txt” file for AI visibility?

No, llms.txt isn’t a required standard for AI visibility. Treat it as an optional curation file that points to your most accurate, citation-ready pages (product, pricing, docs, and key comparisons).

Which schema markup works best for SaaS products?

For SaaS products, start with SoftwareApplication and FAQ schema. Use HowTo markup for setup or onboarding guides to increase extraction potential in AI summaries.

How can I track traffic that comes from AI platforms?

To track traffic that comes from AI platforms, use UTM-tagged links on platforms that support clickable citations, and rely on assisted-conversion rules in your analytics to capture zero-click AI visibility.

How often should SaaS product content be updated for AI search?

Run a quarterly audit of your SaaS features, pricing, and documentation to maintain AI visibility and accuracy in AI-generated search results. Update immediately after any changes to pricing, packaging, or security.

What should I do if my SaaS product never appears in AI answers?

If your SaaS product isn’t appearing in AI answers, strengthen your structure and authority with steps two through six of this playbook (product documentation, FAQ schema, glossary and comparison pages, conversational optimization, and SoftwareApplication schema). Then add off-site expert quotes and re-audit your visibility after 30 days.
