Unlock the Secret KPIs in Generative Engine Optimization That Top Marketing Teams Swear By

Generative AI is changing how people discover brands, products, and information. Because it disrupts the buyer journey, marketers need new metrics, specifically generative engine optimization (GEO) KPIs, that accurately reflect performance within these AI engines.

With Google AI Overviews appearing in over 20% of searches, executives are now asking marketing leaders new questions: Are we showing up in AI answers? Are we being cited? Or are AI engines recommending our competitors?

As search behavior shifts, traditional SEO KPIs alone can no longer explain visibility or downstream revenue impact.

This guide breaks down the GEO KPIs that actually matter, how to measure GEO success, and how to connect AI visibility to business outcomes using tools that marketing teams already trust, including HubSpot AEO.

Why GEO KPIs Matter Now

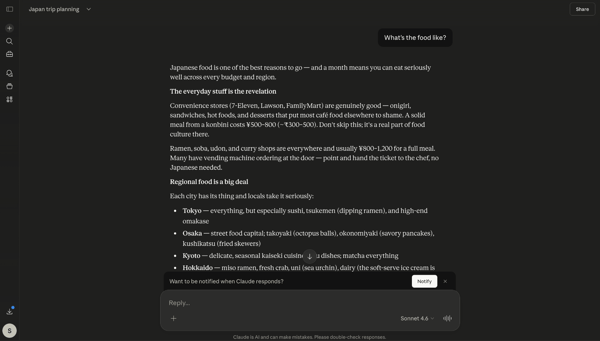

As generative AI becomes a primary decision layer in the buyer journey, generative engine optimization (GEO) KPIs become essential for measuring performance. According to OpenAI, nearly half of all ChatGPT usage falls into the “Asking” category, where users rely on AI for advice, evaluation, and guidance rather than simple task execution.

For many users — 61% of them — these “asks” are product recommendations. This means brand preference is influenced by AI-generated answers, often before a prospect visits a website.

Traditional marketing KPIs don’t capture this layer of visibility. Without understanding where and how often a brand appears in AI answers, it can be challenging to create a strategy to regain or maintain that influence.

In my experience, visibility inside AI answer engines can shift quickly, and it is fragile without a deliberate GEO strategy. After a targeted content update on my own site, I saw my content begin surfacing ahead of long-established industry publishers in AI-generated answers within 96 hours, without any corresponding jump in traditional search rankings.

If I had been tracking SEO metrics alone, I would have missed that change entirely. GEO KPIs exist to pinpoint these shifts before they translate into lost authority or, worse, downstream revenue impact.

Generative Engine Optimization KPIs to Track

The metrics below reflect how AI search behaves in the real world and give teams a clearer, more honest way to evaluate how their brands appear in AI-generated answers. Key metrics for measuring GEO success include AI citation frequency, answer inclusion rate, entity authority signals, AI referral traffic, AI share of voice, and AI-driven leads.

To understand which GEO KPIs and metrics actually hold up, I spoke with Kristina Frunze, founder of WebView SEO, in a recorded interview for the Found in AI podcast.

1. AI Citation Frequency

AI citation frequency tracks how often a brand is named directly in AI-generated answers across large language models (LLMs). Direct brand mentions are the most reliable signal that an AI engine recognizes and recalls a brand.

What the Experts Say: Frunze told me, “For the purpose of AI citations, at the moment, direct brand mentions are the best way to track it. The tools are evolving, and they’re not 100% accurate, but this is what we can rely on right now.”

How I use the metric: I use citation frequency as a baseline trust signal. If a brand isn’t being named at all, no amount of traffic or conversion optimization matters yet. Once I have a sense of where a brand should appear, I can track how its citations change over time.

For a brand that already appears inside AI answers, I track changes in citations after content updates to see whether AI engines recognize the brand as a legitimate source or cite it more often.

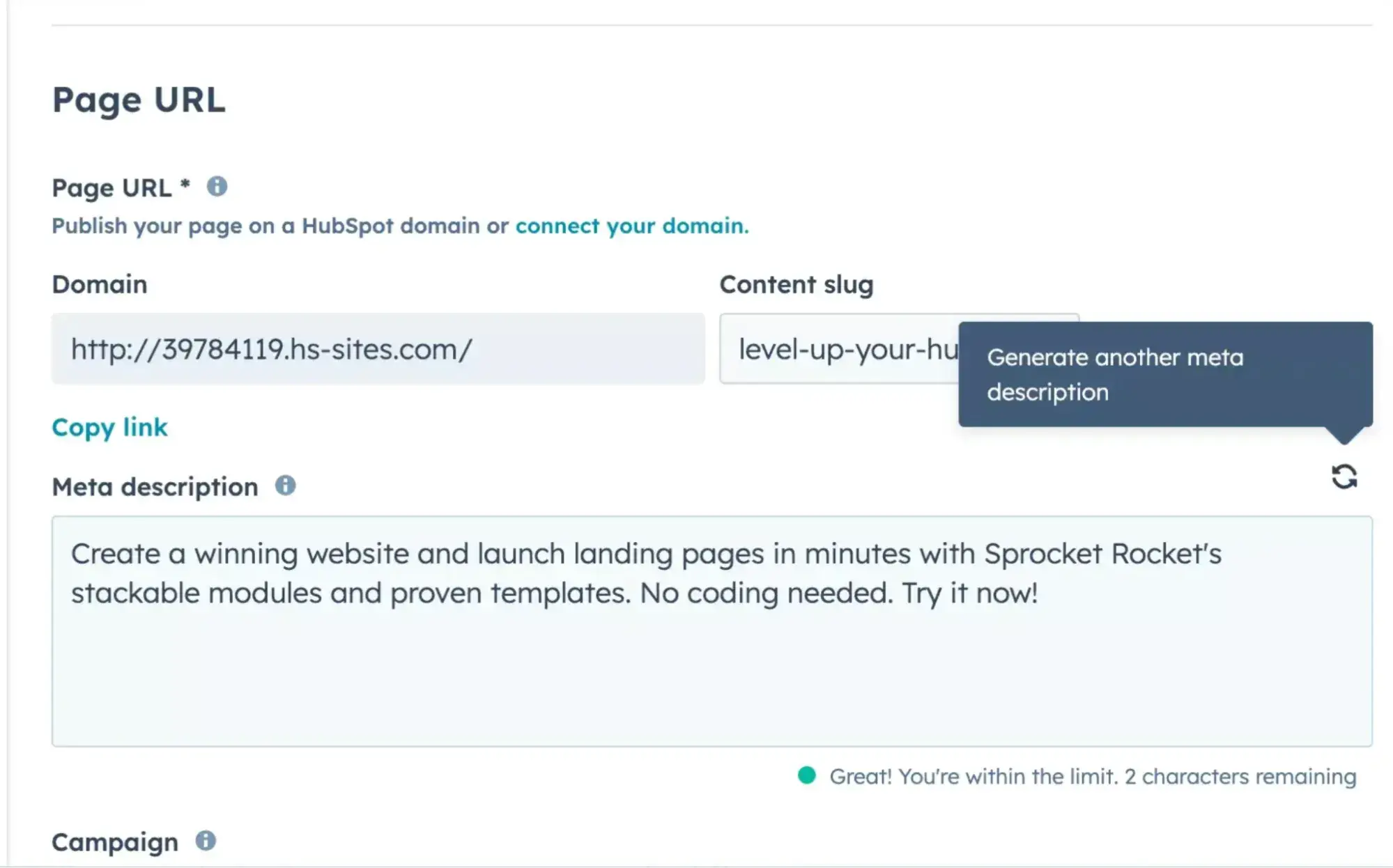

How to track: Monitor direct mentions of a brand in AI-generated answers using tools like HubSpot AEO, XFunnel, Addlly AI, or Superlines. Track changes over time after content updates to see whether AI models increasingly recognize and cite the brand.
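To make “track changes over time after content updates” concrete, here is a minimal sketch in Python. It assumes a hypothetical export of AI answers as `(date, answer_text)` pairs for a fixed prompt set, and it treats a case-insensitive whole-word match on the brand name as a direct mention. The brand names are placeholders, and monitoring tools use their own, more robust detection; this only illustrates the before/after comparison.

```python
import re
from datetime import date, timedelta


def count_brand_mentions(answers, brand, start, end):
    """Count answers dated in [start, end) whose text names the brand."""
    # Case-insensitive whole-word match is a simplifying assumption;
    # real monitoring tools handle name variants differently.
    pattern = re.compile(r"\b" + re.escape(brand) + r"\b", re.IGNORECASE)
    return sum(
        1
        for answered_on, text in answers
        if start <= answered_on < end and pattern.search(text)
    )


def before_after(answers, brand, update_day, window_days=14):
    """Return (mentions before, mentions after) for equal windows around an update."""
    window = timedelta(days=window_days)
    before = count_brand_mentions(answers, brand, update_day - window, update_day)
    after = count_brand_mentions(answers, brand, update_day, update_day + window)
    return before, after


# Hypothetical answers collected for the same prompt set on different days.
sample = [
    (date(2025, 3, 1), "Top picks include Acme CRM and Globex."),
    (date(2025, 3, 5), "Globex is the most popular option."),
    (date(2025, 3, 12), "Many teams choose acme crm for reporting."),
    (date(2025, 3, 14), "Acme CRM and Initech both integrate well."),
]

print(before_after(sample, "Acme CRM", update_day=date(2025, 3, 10)))  # (1, 2)
```

Keeping the before and after windows the same length matters; otherwise a longer window shows more mentions simply because it covers more answers.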

Pro tip: Use HubSpot SEO Marketing Software to align cited pages with topic clusters and internal linking. A strong topical structure increases the likelihood that AI systems will consistently associate your brand with specific subjects.

2. AI Answer Inclusion Rate

AI answer inclusion rate measures how often a brand appears anywhere in an AI-generated response, even when no direct citation or link is provided. This generative engine optimization metric captures presence and relevance, not attribution alone.

What the Experts Say: Frunze explained, “If you just look at your AI citations, you’re missing the bigger picture.” She added that metrics like AI answer inclusion rate help brands understand “what their competitors are doing and how they stand against them in LLM search.”

How I use the metric: I use the inclusion rate to assess whether AI models consider a brand part of the conversation. Inclusion without citation often indicates early-stage authority, which can later translate into citations as content clarity improves.

How to track: Capture all instances where the brand appears in AI responses, whether or not it’s cited, using multi-platform monitoring tools. Compare inclusion trends over time and across competitors to understand early-stage visibility and relevance.
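One way to separate inclusion from citation is to annotate each AI answer with the brands it mentions and the brands it cites, then compute both rates per brand. The sketch below assumes that annotation already exists (the `AnswerRecord` schema and brand names are hypothetical) and counts a cited brand as included too, so a brand’s inclusion rate is always at least its citation rate.

```python
from dataclasses import dataclass, field


@dataclass
class AnswerRecord:
    """One AI answer to a tracked prompt (hypothetical annotation schema)."""
    prompt: str
    mentioned: set = field(default_factory=set)  # brands named anywhere in the answer
    cited: set = field(default_factory=set)      # brands given a link or source citation


def inclusion_and_citation_rates(records, brands):
    """Return {brand: (inclusion_rate, citation_rate)} across all records."""
    total = len(records)
    rates = {}
    for brand in brands:
        # A cited brand also counts as included.
        included = sum(1 for r in records if brand in r.mentioned or brand in r.cited)
        cited = sum(1 for r in records if brand in r.cited)
        rates[brand] = (included / total, cited / total) if total else (0.0, 0.0)
    return rates


records = [
    AnswerRecord("best crm for startups", {"Acme", "Globex"}, {"Globex"}),
    AnswerRecord("acme vs globex", {"Acme", "Globex"}, {"Acme", "Globex"}),
    AnswerRecord("crm with slack integration", {"Globex"}, set()),
    AnswerRecord("affordable crm", {"Acme"}, set()),
]

for brand, (inc, cit) in inclusion_and_citation_rates(records, ["Acme", "Globex"]).items():
    print(f"{brand}: included {inc:.0%}, cited {cit:.0%}")
```

With this sample, both brands are included in 75% of answers, but Globex is cited twice as often (50% versus 25%), which is the pattern of inclusion without citation that often signals early-stage authority.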

Pro tip: HubSpot AEO’s Brand Visibility Dashboard tracks how often your brand appears in AI-generated answers, including instances where the brand is present but not directly cited. Track inclusion trends alongside assisted conversions in HubSpot analytics to understand how early-stage AI presence is influencing downstream pipeline activity.

3. Entity Authority Signals

Entity authority signals measure how consistently AI engines associate a brand with specific topics, attributes, and use cases. These associations are reflected in underlying knowledge graphs and reinforced through:

- Structured data

- Third-party mentions

- Consistent brand positioning across the web

What the Experts Say: “With AI SEO, links don’t matter as long as your brand is actually mentioned on communities, third-party websites, and directories,” Frunze said. “Getting your brand spoken about and getting it right is very important.”

How I use the metric: I treat entity authority as an off-site credibility layer. When I conduct AI visibility audits, I note where a brand is mentioned, whether the information is accurate, and whether AI-generated descriptions align with how the company positions itself.

This means I spend significant time measuring social KPIs and monitoring how users discuss a brand. One-off mentions on platforms like Reddit and Quora can appear in AI-generated answers, but it is important to understand where those comments come from and how they impact a brand’s perception.

How to track: Audit structured data, third-party mentions, and consistent brand positioning across web sources using social listening and entity-tracking tools. Measure how often AI associates the brand with specific topics, attributes, and use cases.

Pro tip: Use HubSpot’s Social Inbox to monitor brand mentions, conversations, and sentiment across social platforms in one place, and pair it with HubSpot AEO’s Sentiment Analysis to see how those external signals are influencing how AI engines actually describe your brand. Keeping a close eye on where and how a brand is talked about helps reinforce consistent entity signals across the web.

4. AI Referral Traffic

AI referral traffic tracks sessions that originate from AI platforms, as recorded in analytics and CRM systems when the platform passes referral data. Although it is under-reported, this metric provides directional insight into how AI visibility translates into site engagement.

What the Experts Say: Frunze told me, “AI traffic is the easiest to track because it feels familiar, but there’s a lot of uncertainty because not all elements pass the proper parameters. You’re not always getting the full picture.”

How I use the metric: Direct referral traffic from AI platforms is relatively easy to spot when it’s clearly labeled as coming from tools like ChatGPT or Perplexity. In practice, though, not all AI-driven sessions provide clean referral data.

Because of that, I treat AI referral traffic as a supporting signal rather than a success metric in its own right. I look at it alongside assisted conversions and branded search lift to understand its true influence, rather than expecting clean last-click attribution.

How to track: Use CRM and analytics platforms (e.g., HubSpot, GA4) to identify sessions coming from AI tools like ChatGPT or Perplexity. Because not all AI traffic passes proper referral data, treat this as a directional metric alongside assisted conversions and branded search lift.
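Custom source groupings in HubSpot and custom channel groups in GA4 are configured in each product’s interface, but the underlying idea is a hostname match on the referrer. The Python sketch below applies that logic to exported session referrers. The hostname list is an assumption based on commonly seen AI referrers; check it against the referrers that actually appear in your own data, since platforms can change domains.

```python
from urllib.parse import urlparse

# Assumed referrer hostnames for common AI assistants; verify against your own data.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def classify_referrer(referrer_url):
    """Return the AI platform name for a referrer URL, or None if it isn't a known AI source."""
    host = (urlparse(referrer_url).hostname or "").lower()
    for domain, platform in AI_REFERRERS.items():
        # Match the domain itself or any subdomain, e.g. www.perplexity.ai.
        if host == domain or host.endswith("." + domain):
            return platform
    return None


sessions = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=best+crm",
    "https://www.google.com/",
    "",  # no referrer at all: the session stays unattributed
]
print([classify_referrer(s) for s in sessions])  # ['ChatGPT', 'Perplexity', None, None]
```

Sessions with an empty referrer fall through to `None`, which mirrors the under-reporting problem: the visit may be AI-driven, but nothing in the referrer says so.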

Pro tip: Create custom source groupings in HubSpot reporting to isolate known AI referrers and evaluate their influence across the full funnel. Pair this with HubSpot AEO’s Prompt Tracking to understand which prompts are driving citations. This gives teams a leading indicator of where AI referral traffic is likely to come from before it shows up in analytics.

5. AI Share of Voice (AI SoV)

AI Share of Voice measures how often a brand appears relative to competitors across a defined set of prompts. Marketing teams typically track this in two ways:

- Entity-based share of voice. Measures whether a brand appears at all in an AI-generated answer.

- Citation-based share of voice. Tracks how often a brand is explicitly cited or referenced.

Together, these views show which brands AI engines trust and rely on to generate an answer.

What the Experts Say: “AI share of voice shows how many times you come up versus your competitors for the prompts,” Frunze explained. “It helps put things in perspective.”

How I use the metric: This is the first GEO KPI I look at when diagnosing AI visibility. If competitors dominate AI responses to high-intent prompts, it usually indicates that the brand I’m working with has positioning or authority gaps.

How to track: Compare a brand’s presence versus competitors across a defined set of AI prompts using tools like XFunnel or Superlines. Track both entity-based and citation-based appearances to understand relative AI trust and authority.
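AI share of voice can be computed from the same kind of answer log. A common definition, assumed here rather than taken from any specific tool, divides a brand’s appearances by the total appearances of all tracked brands across the prompt set. Running it once on the brands that appear (entity-based) and once on the brands that are cited (citation-based) gives both views. The brand names and answer sets are hypothetical.

```python
from collections import Counter


def share_of_voice(answers, tracked_brands):
    """Share of voice per tracked brand across a fixed prompt set.

    `answers` is a list of sets, one per AI answer, holding the brands that
    appear in it (entity-based view) or are cited in it (citation-based view).
    """
    tracked = set(tracked_brands)
    counts = Counter()
    for brands_in_answer in answers:
        for brand in brands_in_answer & tracked:
            counts[brand] += 1
    total = sum(counts.values())
    # Each share is the brand's appearances over all tracked-brand appearances,
    # so shares sum to 1 whenever at least one tracked brand appears.
    return {b: (counts[b] / total if total else 0.0) for b in tracked_brands}


tracked = ["Acme", "Globex", "Initech"]
entity_view = [{"Acme", "Globex"}, {"Globex"}, {"Globex", "Initech"}, {"Acme"}]
citation_view = [{"Globex"}, {"Globex"}, {"Initech"}, set()]

print(share_of_voice(entity_view, tracked))
print(share_of_voice(citation_view, tracked))
```

In this sample, Globex holds half of the entity-based share and two thirds of the citation-based share, while Acme appears in answers but is never cited.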

Pro tip: Use XFunnel to measure AI visibility and share of voice across LLMs. Pair this data with KPI dashboards to contextualize AI exposure alongside pipeline and revenue metrics.

6. AI-Driven Leads

AI-driven leads measure conversions influenced by AI discovery, particularly for bottom-of-funnel queries such as competitor comparisons, alternatives, and integrations. This metric is most valuable for understanding how AI visibility translates into pipeline, because these interactions typically come from buyers who are close to making a purchase decision.

What the Experts Say: Frunze mentioned, “The content that drives AI leads the most is bottom-of-funnel content. These prompts usually come from people already evaluating options and are past the awareness stage.”

How I use the metric: I use AI-driven leads to understand whether GEO work is contributing to revenue, not just visibility. I review form fills and deal creation alongside high-intent pages like comparisons, alternatives, and integrations.

Within those forms, I look for explicit references to ChatGPT, Perplexity, or Gemini. Sometimes, I ask customers where they first heard about the brand.

How to track: Connect AI referral data with lead tracking in the CRM to quantify conversions originating from AI interactions. Use UTM parameters or platform-specific identifiers to measure downstream impact on pipeline and revenue.
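The two signals above, UTM parameters on the landing URL and explicit mentions of AI tools in form responses, can be checked together when a lead is created. The sketch below is a hypothetical helper, not a HubSpot feature: it assumes the lead record stores the first landing-page URL and a free-text “How did you hear about us?” answer, and it uses an illustrative keyword list to spot AI platforms.

```python
import re
from urllib.parse import parse_qs, urlparse

# Illustrative keyword list for AI platforms; extend it for your audience.
AI_KEYWORDS = re.compile(r"\b(chatgpt|openai|perplexity|gemini|copilot|claude)\b", re.IGNORECASE)


def ai_source_for_lead(landing_url, heard_about_us=""):
    """Return a label describing how AI influence was detected for a lead, or None.

    Checks, in order: (1) a utm_source value on the landing URL that names an
    AI platform, (2) an AI platform named in the self-reported form answer.
    """
    params = parse_qs(urlparse(landing_url).query)
    utm_source = params.get("utm_source", [""])[0]
    if AI_KEYWORDS.search(utm_source):
        return f"utm:{utm_source.lower()}"
    match = AI_KEYWORDS.search(heard_about_us or "")
    if match:
        return f"self-reported:{match.group(1).lower()}"
    return None


leads = [
    ("https://example.com/pricing?utm_source=chatgpt.com", ""),
    ("https://example.com/compare/acme-vs-globex", "Perplexity recommended you"),
    ("https://example.com/demo?utm_source=newsletter", "A colleague"),
]
for url, answer in leads:
    print(ai_source_for_lead(url, answer))
```

Checking the UTM value first keeps the machine-recorded signal ahead of the self-reported one; the form answer fills gaps when an AI platform passes no parameters at all.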

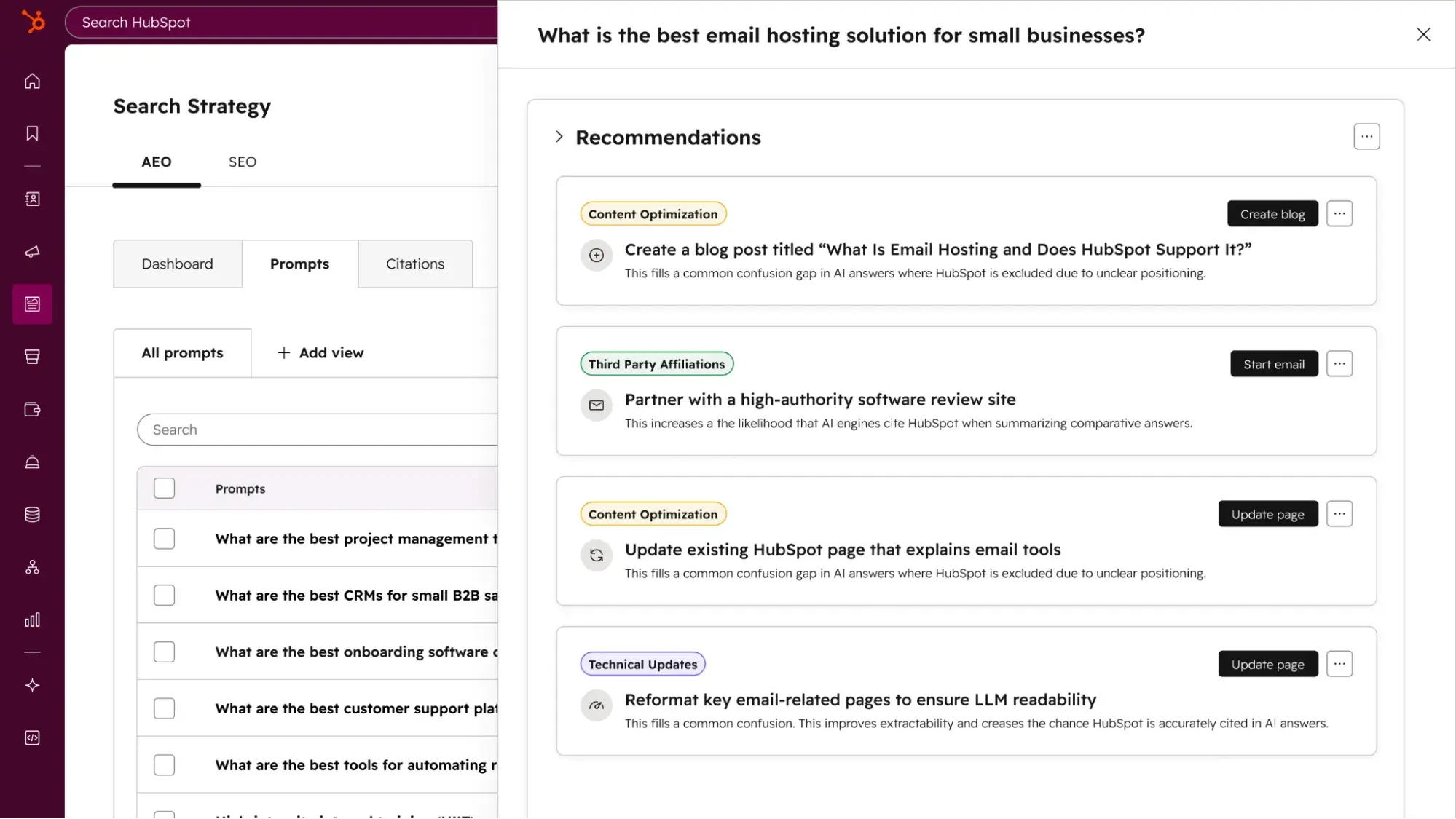

Pro tip: Track AI-influenced form fills and deal creation inside HubSpot CRM to understand how generative search contributes to the pipeline, even when attribution isn’t linear. Use HubSpot AEO’s Recommendations feature to prioritize which visibility gaps to close first. Each recommendation includes a full content brief tied to the bottom-of-funnel prompts most likely to drive AI-referred leads.

Quick Overview: SEO KPIs vs GEO KPIs

Best Tools to Monitor GEO KPIs Across AI Platforms

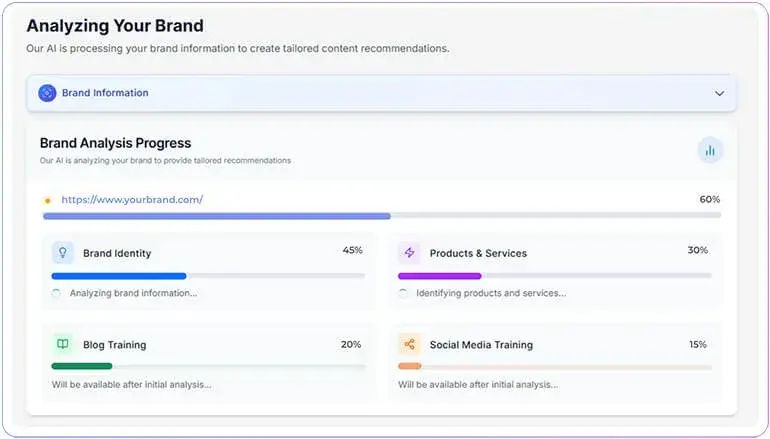

1. HubSpot AEO

HubSpot AEO tracks and improves how a brand appears across major answer engines, including ChatGPT, Perplexity, and Gemini. HubSpot AEO directly measures core GEO KPIs, from citation frequency and AI share of voice to prompt-level prominence and sentiment.

Unlike tools that focus on a single metric or require stitching together data from multiple sources, HubSpot AEO centralizes GEO measurement in a single dashboard. This makes it possible to track performance consistently over time and connect visibility shifts directly to content and strategy changes.

Key Features:

- Brand visibility dashboard. Tracks answer inclusion rate across answer engines, showing how often the brand appears in AI-generated answers for priority prompts and how that score shifts over time.

- Competitor analysis. Powers AI share of voice measurement, showing relative presence versus competitors across the same prompt set, so teams can identify where they’re gaining or losing ground.

- Prompt tracking and suggestions. Monitors answer prominence and positioning at the prompt level, including which prompts cite the brand, which cite competitors instead, and where the brand is completely absent.

- Citation analysis. Surfaces which domains, content types, and source channels AI engines are pulling from when answering prompts in the category.

- Sentiment analysis. Measures how positively or negatively the brand is described in AI-generated responses on a scale from -100% to +100%, giving teams an early signal of entity authority issues alongside visibility gaps.

- Recommendations. Turns visibility and citation data into a prioritized action plan, with full content briefs for each recommendation so teams know exactly what to create or change to move the needle on GEO KPIs.

Best for:

- Marketing teams that need a single dashboard to track GEO KPIs consistently over time.

- Brands that want to connect AI visibility to pipeline and revenue outcomes without managing multiple tools.

- Teams reporting AI performance to leadership who need clear, comparable data across answer engines.

Pricing: Available in Marketing Hub Pro and Enterprise, or as a dedicated tool for $50/month without a HubSpot subscription.

What I like: Most GEO KPI tracking requires a combination of manual testing, spreadsheet tracking, and disconnected tools. HubSpot AEO brings the core metrics into one place so teams can monitor performance consistently rather than episodically. The centralized dashboard makes it significantly easier to show directional movement over time and connect AI visibility to pipeline outcomes.

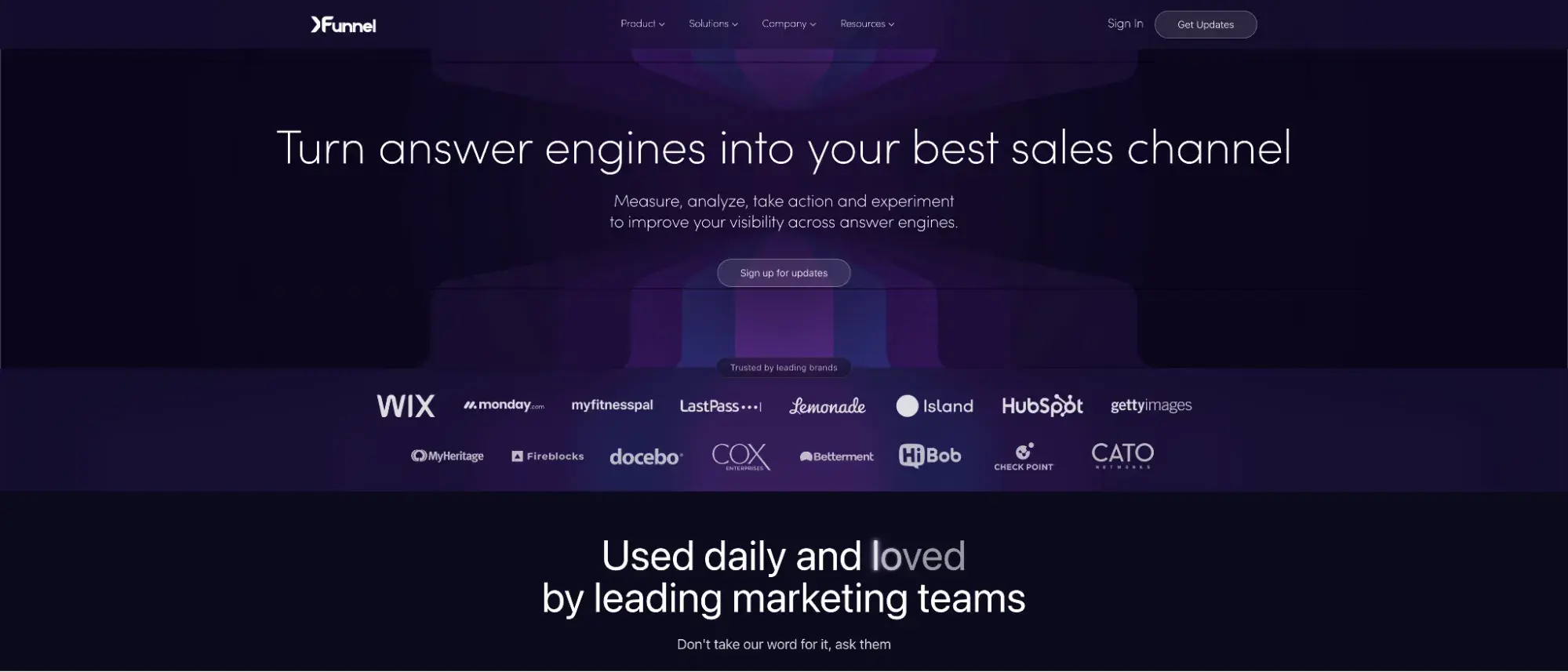

2. XFunnel

XFunnel measures how brands appear in AI-generated responses from large language models by analyzing AI share of voice, citations, and entity mentions. Instead of relying on traffic as a proxy, it shows how AI engines actually surface and describe brands in response to real user prompts. XFunnel helps teams answer questions traditional analytics can’t, like:

- Which brands are being named most often for high-intent prompts?

- Are we included at all, or consistently excluded?

- When we do appear, are we cited, summarized, or just listed?

Most GEO KPIs require direct observation of AI responses. XFunnel does that at scale. It gives marketing teams a way to move beyond anecdotal testing and understand competitive positioning inside AI search in a repeatable, measurable way.

Best for:

- Marketing teams tracking AI share of voice and competitive visibility.

- Brands operating in crowded categories where being “on the list” matters.

- Leaders who need to explain AI performance without relying on traffic alone.

Pricing: Pricing varies based on usage, prompt volume, and reporting depth.

What I like: XFunnel focuses on answer-level visibility, not just referral traffic. That aligns with how generative search works today: influence often occurs without a click.

I also like that it separates entity-based visibility from citation-based visibility, which maps directly to the GEO KPIs teams need to report on.

Seeing how often competitors appear — and in what context — makes it easier to prioritize content updates and address authority gaps.

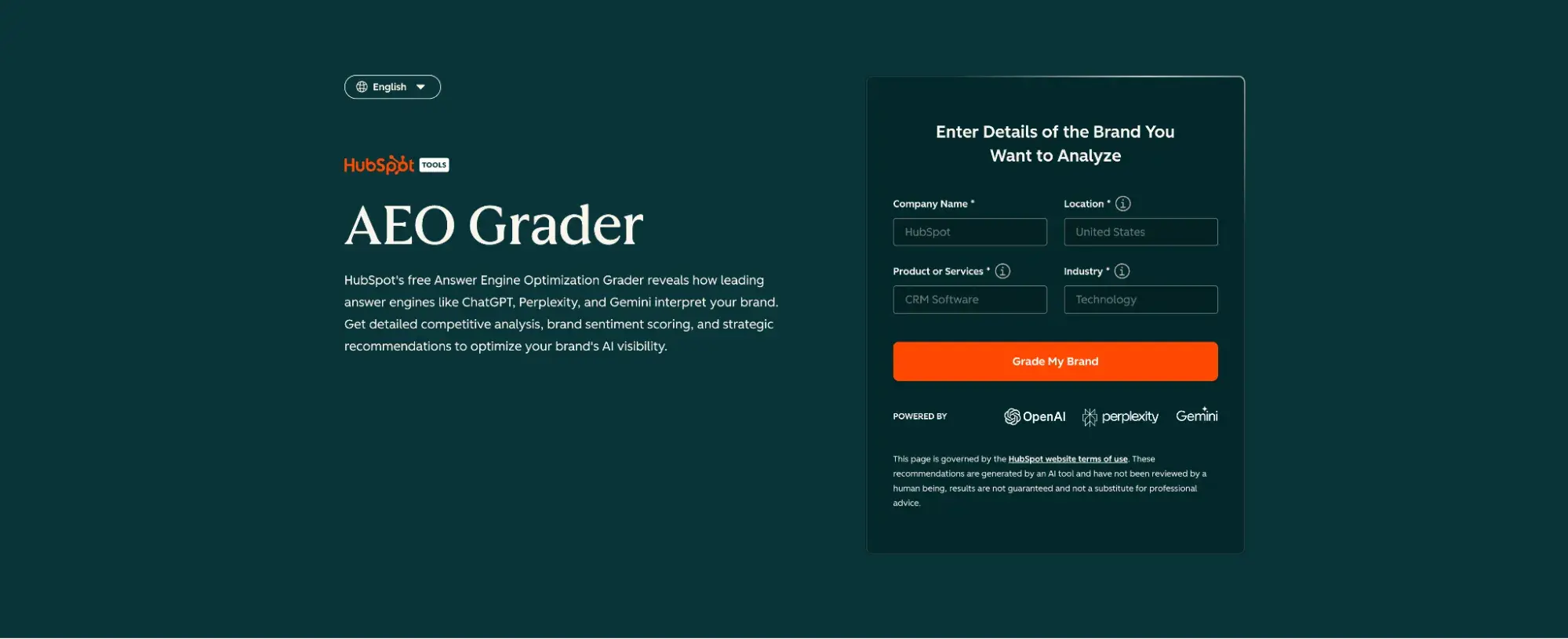

3. HubSpot’s AEO Grader

HubSpot’s AEO Grader is a free tool that evaluates how well a site is structured for AI and answer engines. It focuses on foundational elements — such as schema implementation, page structure, and content clarity — that influence how AI systems interpret and surface information.

The AEO Grader helps surface structural gaps that directly affect GEO KPIs. For teams just getting started, it provides a fast way to identify technical and structural blockers before investing in deeper optimization work.

Best for:

- Teams auditing AI readiness without committing to new tooling.

- Marketers validating whether schema and structure are implemented correctly.

- Organizations that want to identify technical and structural blockers before investing in deeper AEO work.

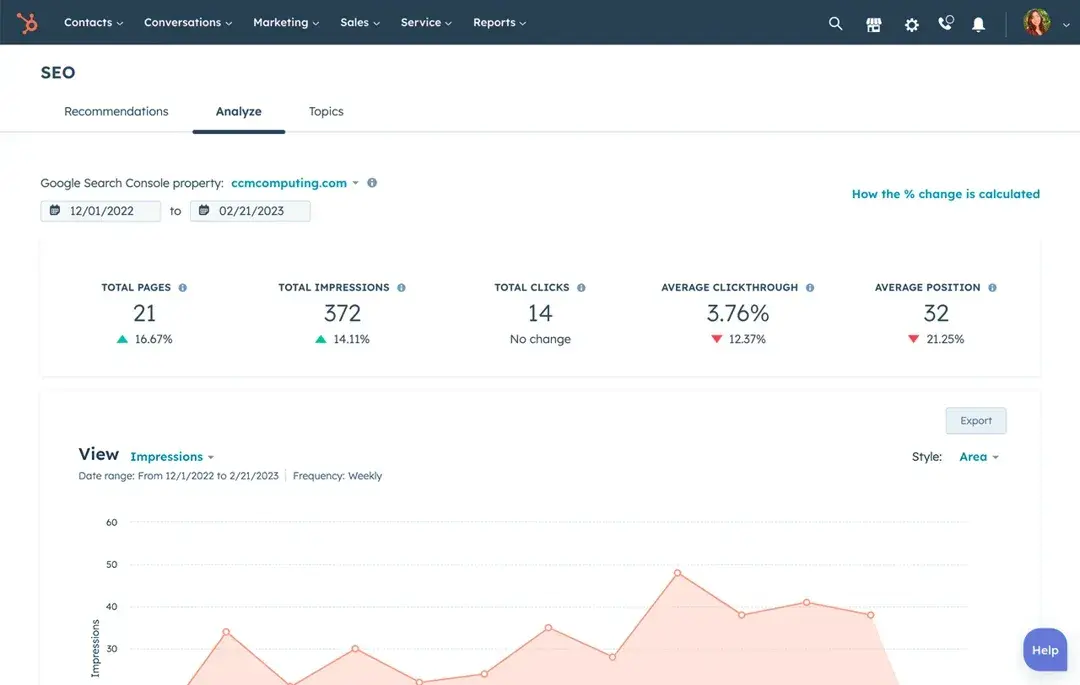

4. HubSpot’s SEO Marketing Software

HubSpot’s SEO Marketing Software helps teams plan and measure content performance through topic clustering, on-page recommendations, and integrated performance reporting.

While built for traditional search, the same signals matter for AI engines. Topic clusters reinforce entity authority by clarifying what a brand is about and which pages should be treated as primary sources, while on-page recommendations support clear structure and semantic alignment.

Best for:

- Teams that want SEO and GEO measurement in one platform.

- Marketing leaders who need to tie content performance to the pipeline and revenue.

- Organizations standardizing content structure and topical authority across teams.

What I like: HubSpot’s SEO Marketing Software doesn’t live in a vacuum. Instead of pulling SEO data from one tool, AI visibility from another, and revenue data from a third, HubSpot allows teams to connect content performance to pipeline outcomes in a single system.

I also find topic clustering especially useful for GEO because it forces teams to be explicit about core themes, which is what AI engines reward when deciding which sources to trust.

5. HubSpot’s Content Hub

HubSpot’s Content Hub is a CMS designed to help teams create, manage, and optimize content with built-in SEO guidance and support for structured, schema-ready publishing. It allows marketers to standardize how content is written, organized, and maintained across the site.

For GEO, structure matters as much as substance, because AI engines rely on clearly organized content to understand what a page is about and when it should be reused in an answer.

Content Hub supports this by encouraging clean page structure. Teams can implement the schema and structured data that help AI engines interpret key information more accurately.

What I like: Content Hub makes it easier to operationalize effective content writing habits at scale. Instead of relying on individual writers to remember schema rules or formatting best practices, the CMS itself nudges teams toward consistency.

Best for:

- Teams publishing content for both humans and AI systems.

- Organizations standardizing content structure across multiple contributors.

- Marketers who want schema-ready content without custom development work.

6. Addlly AI

Addlly AI is a platform that combines GEO auditing with AI-driven optimization to show how brands appear in AI-generated responses across multiple large language models. It tracks citations, mentions, and AI share of voice, giving teams a clear view of where their content is being surfaced or ignored by generative engines.

Addlly AI’s GEO Agent goes beyond reporting by helping teams take action: it identifies visibility gaps, generates AI-optimized content, and structures information in a way that increases the likelihood of being cited by AI. Teams can see not just whether they appear, but how they appear (summarized, cited, or listed) across different AI platforms.

Best for:

- Marketing teams that want end-to-end AI visibility tracking and optimization.

- Brands operating in competitive categories where being cited or summarized matters.

- Teams that want to move beyond traffic-based metrics to understand real AI-driven influence.

Pricing: Flexible, based on audit depth, prompt volume, and AI content generation usage.

What I like: Addlly integrates diagnostics and execution, so teams don’t just get a snapshot of visibility — they get the tools to improve it. It also separates entity mentions from citations, which aligns perfectly with the GEO KPIs teams need to measure. Seeing where competitors appear and in what context makes prioritizing content updates much more strategic.

7. Superlines

Superlines is an AI search intelligence platform that measures how brands appear in generative AI responses across platforms like ChatGPT, Perplexity, Gemini, Claude, and more. It focuses on answer-level visibility, tracking brand mentions, citations, sentiment, and competitive share of voice in real user-facing AI outputs.

Rather than relying on search traffic or generic rankings, Superlines gives teams direct observation of AI responses, showing exactly where and how a brand is included or excluded. This makes it possible to benchmark against competitors, identify content authority gaps, and prioritize updates strategically.

Best for:

- Marketing teams tracking AI share of voice and multi-platform visibility.

- Brands in highly competitive categories where answer-level inclusion matters.

- Teams that need a measurable way to show AI influence without relying on clicks.

Pricing: Based on platform coverage, reporting frequency, and team scale.

What I like: Superlines emphasizes real, user-facing AI visibility instead of indirect metrics. It captures multi-platform AI outputs at scale, giving teams repeatable insights for competitive positioning. Its combination of citation and context tracking maps directly to GEO KPIs that matter for reporting.

Common GEO Measurement Challenges and How to Solve Them

As teams adopt generative engine optimization, they often run into measurement challenges that don’t exist in traditional SEO. Many of these issues stem from how AI platforms surface answers, limit attribution, and distribute influence across channels.

Below are the most common GEO measurement challenges, followed by practical ways to address them based on real-world experience.

1. Limited AI Referral Data

The challenge: Many AI platforms suppress or delay referral data, making it difficult to attribute website sessions or conversions to a specific AI source within analytics and CRM systems.

My experience: In analytics dashboards, I’ve repeatedly seen what appear to be “ghost” referrals — sessions that lead to sign-ups, form fills, or deals, but aren’t tied to a clear referring engine. The engagement is real, but the source attribution is incomplete.

How to solve it: The goal is to understand influence, not just clicks. Instead of relying solely on referral data, look for additional signals. That includes:

- Reviewing form responses for mentions of ChatGPT, Perplexity, or Gemini.

- Asking prospects directly how they first heard about the brand.

- Monitoring citations or mentions in places that don’t surface cleanly in analytics.

2. KPI Overload

The challenge: GEO introduces a wide range of potential metrics, and tracking too many at once can create KPI reporting noise that obscures meaningful insights.

My experience: I’ve seen teams struggle when they try to monitor every possible GEO KPI simultaneously. Reporting becomes harder to explain, and optimization efforts lose focus.

How to solve it: I recommend choosing one or two KPIs that the team can actively influence in the near term. The remaining metrics can stay on the back burner. I’ve found that building a deep understanding of a small set of signals creates far more progress than shallow tracking across dozens of indicators.

3. Tool Fragmentation

The challenge: GEO data is often spread across SEO platforms, AI visibility tools, analytics software, and CRM systems, making it difficult to form a cohesive view of performance.

My experience: I’ve seen teams invest in GEO tools that don’t deliver actionable insights. Not every platform that claims to measure AI visibility is worth the investment.

How to solve it: The most effective approach is to combine answer-level visibility tools with centralized reporting. XFunnel is useful here because it focuses on how brands appear inside AI-generated answers, rather than relying on traffic proxies. Pairing that insight with HubSpot reporting reduces fragmentation and increases confidence in the data.

4. Executive Skepticism

The challenge: Leadership teams may question GEO metrics because they lack familiar benchmarks and long-established reporting standards.

My experience: As a fractional content strategist working with C-suite leaders, I’ve encountered skepticism around whether GEO is worth the effort. Some leaders lean heavily on the idea that “good SEO is good GEO,” and many are hesitant to adjust existing processes.

How to solve it: Competitive framing helps. Tracking AI share of voice for a short period and comparing it against competitors quickly shows where influence is being gained or lost inside AI-generated answers. Once leaders see that gap, the value of GEO metrics becomes much easier to justify.

5. Measuring Influence Without Clicks

The challenge: AI-generated answers don’t always result in immediate website visits, making traditional traffic-based performance indicators incomplete.

My experience: I’ve seen GEO improvements show up well before any noticeable lift in sessions, and before traditional rankings catch up. If teams rely only on clicks, they risk missing early indicators of impact.

How to solve it: Look beyond last-click attribution and monitor branded search lift, assisted conversions, and downstream deal creation over time. GEO influence often appears later in the funnel, not always at the moment of discovery.
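Branded search lift is a simple percentage change, but it is easy to compute inconsistently from one report to the next. A minimal sketch, assuming you export daily branded query counts (for example from a search console report) for two equal-length windows before and after a GEO change; the numbers are hypothetical:

```python
from statistics import mean


def branded_search_lift(baseline, current):
    """Percent change in average daily branded searches between two windows."""
    base = mean(baseline)
    if base == 0:
        raise ValueError("baseline average is zero, so lift is undefined")
    # Percentage change relative to the baseline average.
    return (mean(current) - base) * 100 / base


# Hypothetical daily branded query counts, before and after a content update.
before = [120, 130, 110, 140]
after = [150, 160, 140, 150]
print(f"Branded search lift: {branded_search_lift(before, after):.1f}%")  # 20.0%
```

A positive lift is directional evidence, not proof: seasonality, campaigns, and PR can all move branded search, so read it alongside assisted conversions and deal creation.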

Frequently Asked Questions About GEO KPIs

How often should you report GEO KPIs to executives?

Monthly reporting works best for GEO KPIs because it allows teams to identify directional trends without overreacting to short-term volatility in AI-generated answers. AI visibility can fluctuate week to week as models refresh, prompts shift, or competitors publish new content, so a monthly cadence helps smooth out noise and surface meaningful movement.
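A monthly roll-up is straightforward if your monitoring tool exports weekly scores. The sketch below assumes a hypothetical list of `(week_start, score)` pairs, such as a weekly inclusion rate between 0 and 1, and averages them by calendar month; assigning each week to the month it starts in is a simplification.

```python
from collections import defaultdict
from datetime import date
from statistics import mean


def monthly_average(weekly_scores):
    """Roll (week_start, score) pairs up to {"YYYY-MM": average score}."""
    buckets = defaultdict(list)
    for week_start, score in weekly_scores:
        # Each week counts toward the month its start date falls in.
        buckets[(week_start.year, week_start.month)].append(score)
    return {f"{y}-{m:02d}": mean(scores) for (y, m), scores in sorted(buckets.items())}


# Hypothetical weekly inclusion rates from a monitoring export.
weekly = [
    (date(2025, 4, 7), 0.30),
    (date(2025, 4, 14), 0.45),  # one-off spike, e.g. after a model refresh
    (date(2025, 4, 21), 0.28),
    (date(2025, 4, 28), 0.31),
    (date(2025, 5, 5), 0.36),
    (date(2025, 5, 12), 0.34),
]
print(monthly_average(weekly))
```

In this sample, the one-week spike in April is absorbed into a monthly average of about 0.335 instead of being reported as a jump from 0.30 to 0.45.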

Quarterly reviews are where GEO KPIs should be tied back to pipeline, revenue, and competitive positioning. Framing GEO performance alongside existing business reviews helps normalize it within the growth conversation rather than treating it as a standalone experiment.

What is the simplest way to tag AI-referral traffic in analytics and CRM?

The simplest approach is to start with custom source groupings inside HubSpot that capture known AI referrers such as ChatGPT, Perplexity, and Gemini. While not all AI platforms pass clean referral data, grouping what is visible creates a baseline signal.

From there, campaign parameters and CRM fields can help fill in gaps. For example, adding a short “How did you hear about us?” field to high-intent forms often surfaces AI discovery even when analytics does not. Over time, these signals combine to form a clearer picture of AI influence across the funnel.

How do you prioritize content updates to improve GEO KPIs?

The highest-impact updates usually start with prompt-level visibility, not page-level performance. Prioritize content tied to prompts where competitors already appear in AI-generated answers, especially for comparison, alternative, or evaluation-style queries.

From there, look for gaps, such as unclear positioning, outdated language, weak structure, or missing context that would help an AI engine understand why the brand belongs in the answer. Updating those pages with stronger differentiation and better structure tends to produce faster GEO gains than publishing entirely new content from scratch.
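This prioritization can be expressed as a filter and a sort over prompt-level results. A minimal sketch, assuming a hypothetical mapping from each tracked prompt to the set of brands that appeared in its AI answer, and using a simple keyword pattern (also an assumption) to flag comparison, alternative, and evaluation-style prompts:

```python
import re

# Hypothetical pattern for evaluation-style prompts; tune it to your category.
HIGH_INTENT = re.compile(r"\b(vs|versus|alternatives?|compare|comparison|best)\b", re.IGNORECASE)


def prioritize_prompts(prompt_results, brand):
    """List prompts where competitors appear in the AI answer but `brand` does not.

    High-intent prompts come first; within each group, prompts with more
    competitors present come first, then alphabetical order.
    """
    gaps = []
    for prompt, brands in prompt_results.items():
        competitors = brands - {brand}
        if brand in brands or not competitors:
            continue  # already visible, or no competitor presence to benchmark
        is_high_intent = HIGH_INTENT.search(prompt) is not None
        # False sorts before True, so high-intent prompts lead the list.
        gaps.append((not is_high_intent, -len(competitors), prompt))
    return [prompt for _, _, prompt in sorted(gaps)]


results = {
    "globex alternatives": {"Globex", "Initech"},
    "best crm for startups": {"Acme", "Globex"},
    "crm with slack integration": {"Globex"},
    "globex vs initech": {"Globex", "Initech"},
    "how to clean crm data": set(),
}
print(prioritize_prompts(results, "Acme"))
# ['globex alternatives', 'globex vs initech', 'crm with slack integration']
```

Prompts where no brand appears at all are excluded because there is no competitor presence to benchmark against; they may still be worth covering, but they represent a different kind of gap.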

When should you consider new GEO KPIs versus optimizing existing ones?

New GEO KPIs should only be introduced when existing metrics no longer explain what’s happening. If current KPIs still help answer questions about visibility, competition, and revenue influence, adding more metrics usually creates confusion rather than clarity.

New KPIs should serve strategy, not expand dashboards.

Turning GEO KPIs Into a Competitive Advantage

Generative engine optimization KPIs give marketing teams visibility into a part of the buyer journey that traditional analytics can’t fully explain. By tracking citations, entity authority, prompt inclusion, and AI-driven influence, teams gain a clearer picture of how their brand performs inside modern search experiences.

From what I’ve seen, the teams that win with GEO measurement are the ones that integrate AI visibility into existing systems, rather than treating it as a side experiment. Tools such as HubSpot AEO enable that integration without adding unnecessary complexity.

As AI-powered discovery becomes the default, GEO KPIs won’t be optional. They’ll be how confident marketing leaders explain performance, defend strategy, and prove impact, even when the click never comes.

Editor’s note: This post was originally published in January 2025 and has been updated for comprehensiveness.
